Latest News

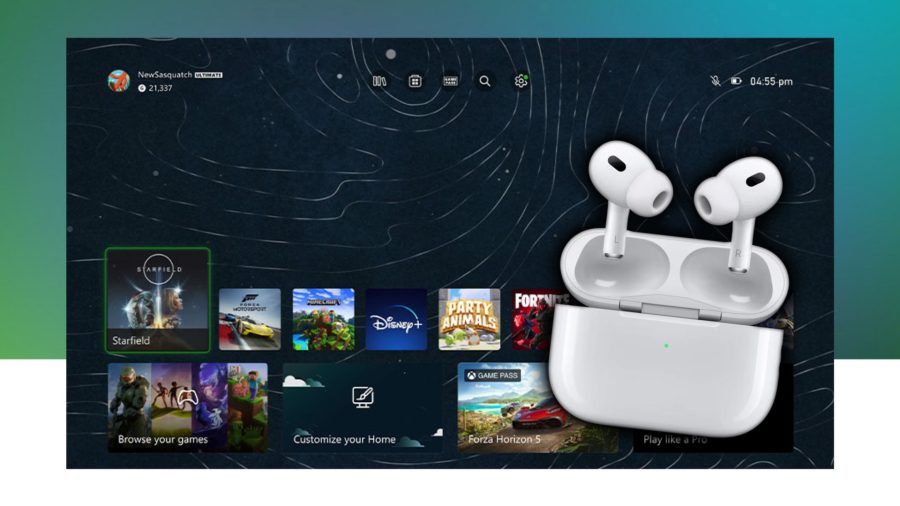

How to connect AirPods to Xbox consoles

If you’re a hardcore gamer, you’re likely using a top-of-the-line headset to hear every single footstep. However, if you’re a more casual player, perhaps someone who plays from the sofa, you’ll probably want a more convenient audio solution that still offers solid sound. Enter Apple AirPods. Most iPhone users these…