Latest News

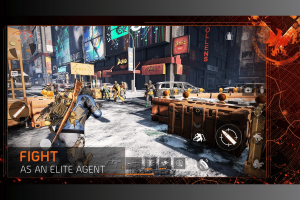

New Batman: Arkham Shadow comes to Meta Quest 3

Batman: Arkham Shadow, the latest adventure in the decade-old Batman: Arkham series, comes to the Meta Quest 3 VR headset in late 2024, according to an official post from the device maker. “Evil stalks the streets. Gotham City is in danger. And you’re the only one who can save it,” says the Oculus tagline. The…