This is not an Apple-bashing piece. It is also not an attempt to cut an American icon down to size at a time when we’re remembering the magnificent contributions of its fallen founder. This is about how failure makes us better.

I’ve lost count of the number of times I’ve heard, seen, or read comparisons of Steve Jobs to Thomas Edison since early yesterday evening. Jobs did not invent anything – not the personal computer, not the MP3 player, not the tablet. But beyond that fact, there are certain other stark similarities. One: Jobs, like Edison, was a fierce competitor who sought to control not only the delivery channel for his products, but the market surrounding those products. Two: Like the finest scientists, Jobs studied his own failures and Apple’s very carefully and, unlike Microsoft, built his next success upon the smoking ruins of those failures.

Readers will likely remind me that certain of the “mistakes” on this list were made between 1985 and 1996, when Jobs was not part of Apple. He was not responsible for those failures, but he still learned from them. No doubt he studied Apple the way a falcon studies a desert highway. NeXT is not on this list because it was not an Apple mistake. Nor, in many ways, was it a mistake at all; the NeXT computer was a marketing failure, but in hindsight its purpose was to serve as a lifeboat for good ideas, and in that respect it was a success.

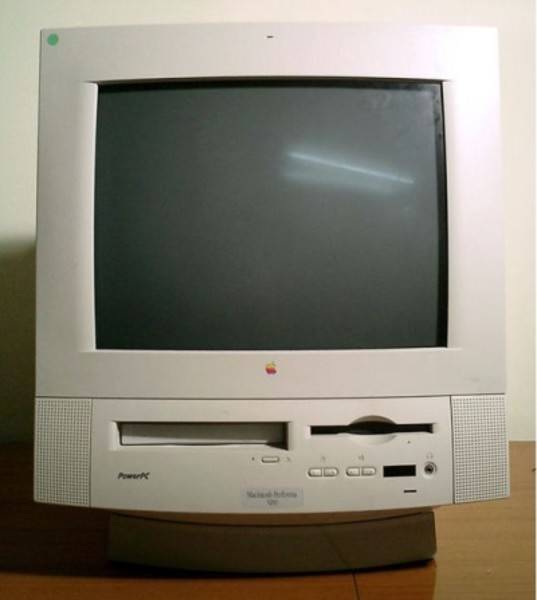

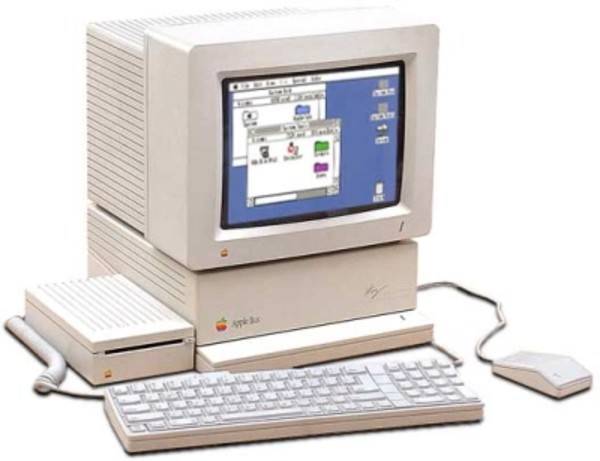

6. Macintosh Performa (1992 – 1997)

At a time when independent computer stores in the U.S. were dying, the principal sales channel for mass market computers was the big-box retail outlet. To make a dent in this channel, a manufacturer had to let the stores call most of the shots. First, they wanted a clear brand distinction between “home” and “business” PCs. Never mind whether there was any real difference in the products, just as long as the branding was distinct. Second, they dictated the price points. Stores classified their customers according to how much cash they were likely to part with. Third, they had a hand in marketing. High-end retail brands were the foundations of electronics stores; they were there to make the stores look good.

The Performa product line was the antithesis of what we have come to know as the Apple philosophy. Its marketing premise, conceived under CEO John Sculley and perpetuated under his successor, Michael Spindler, was that if you used a Macintosh at work, then certainly you’d want your own Mac at home. That premise implied that Macintosh was not one thing – one concept with a few simple variations – but rather a brand stamped onto a product, like Plymouth or Hotpoint. But also, to Performa’s own detriment, it demonstrated that the difference between a premium business computer and a “value” home computer boiled down to replacing the aluminum cover with cheap plastic. Performa chassis were literally stripped-down versions of Mac business models with cheaper plastic bolted on, and the cost savings alone justified Performa’s entire existence.

Although Performa did indeed contribute to an overall rebound in Mac market share, it did so at the expense of consumers’ belief in Apple as anything more than a logo. It did not endear Macintosh to the general public; worse, as the Windows 95 era dawned, it contributed to the notion that whatever innovation Mac had planned left the company with Steve Jobs.

5. Apple IIgs (1986)

There was never a particularly bad Apple II computer, and in retrospect, the IIgs was not bad at all at being an Apple II. The problem with this machine is that it tried to be something else at the same time: a kind of Macintosh-ish color system that used a mouse and did things like a Mac, with a switch that let it go back to behaving like a 6502-based Apple II.

The Apple II software base had more titles than any other platform for most of its existence (the Commodore 64 would later claim that prize, but few great C64 programs were applications). Many third-party developers had created point-and-click layers for the IIe and IIc that let users launch programs from Finder-style windows. But that wasn’t the same as making applications respond to mouse input. Backward compatibility meant that most apps defaulted to the II’s classic 40-column mode, which was too slow for mouse pointers. Since 80-column, full-color text and graphics required new hardware anyway, Apple gambled that users would accept that capability in a new computer that embraced the old one without extending it.

Existing Apple II users did accept it, but not in large numbers. Although the IIgs lived up to Apple’s standards for quality, it could not attract new users who had not made up their minds… because that market did not exist. Apple loyalists who wanted graphical computing purchased Macs, even though they were sacrificing color to do so. The rest needed the device that ran their applications (DOS machines) or their games (C64). The lesson learned here was that you cannot migrate your customer base from one platform to another by creating a third one in-between and leveraging it like a pontoon bridge.

4. Macintosh (1984)

No, not the Mac as a whole, not Mac as a platform. The first Macintosh, the 128K edition, warts and all.

Completely new devices that successfully revolutionize a market cannot be half-baked, like the Edsel or New Coke. They must do everything that a customer can reasonably imagine can be done with them, and not halfway. Not in the future, not “coming soon.” The iPhone is the permanent, lasting record of Steve Jobs having learned the lesson of Macintosh #1.

Besides MacPaint and MacWrite, Mac #1 arrived on the market with only the promise of new software, and with nothing remotely resembling the developer tools needed to remedy that little problem. It was a new paradigm, a new way to work, assuming your definition of “work” was printing “Happy Birthday” banners for your kids or defacing a black-and-white scan of the Mona Lisa. Other reviewers noted that its word processor could save a document of no more than 10 pages to its single-sided floppy drive. For me, the limit was four.

Those of us whose memories of the Altair 8800 were still fairly fresh clearly understood the promise and potential of this machine. We also knew how difficult it would be for consumers to buy into it. Prior to the airing of the classic Super Bowl ad, the clearest sign that Apple hadn’t figured out a solution to Macintosh’s missing software was a 1983 multi-page print ad. Instead of pictures of the software to come, it showed some of the leaders of the software industry in red Macintosh polo shirts, including Lotus Development’s Mitch Kapor and Microsoft’s Bill Gates. Their faces represented the promise that software would some day surely come.

Right up until yesterday, some of the most faithful Apple devotees I’ve ever known were telling me they held Steve Jobs personally responsible for withdrawing hardware expandability – the crown-jewel function of the Apple II – from the original Macintosh.

Folks don’t plunk down $2,495 for “some day” promises. Not until the Macintosh Plus in 1986 would the platform appear to the market as complete, and by that time Mac was already fending off competition in the 68000 CPU space from the Commodore Amiga and Atari ST.

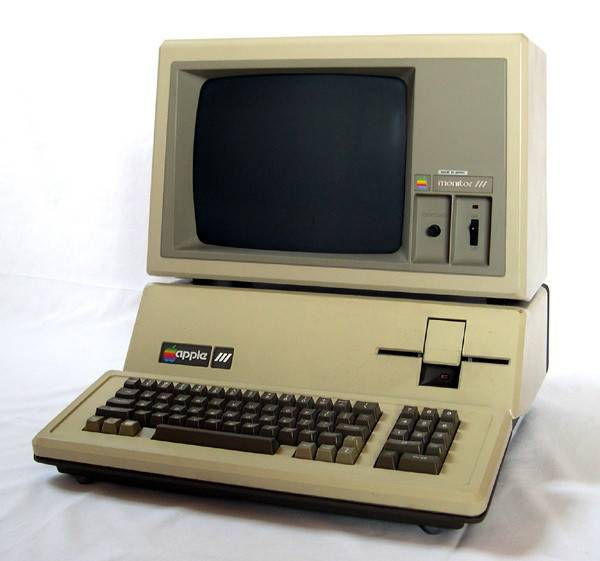

3. Apple III (1980)

In 1980, the success of a computer platform still depended on hundreds of independent boutique retailers all over the country, and a support network of thousands of self-employed consultants, educators, contributing editors, and users’ group leaders. But even at this early period in computing history, Tandy Corporation had already been demonstrating, through the Radio Shack TRS-80, that a brand and a platform were not synonymous. Tandy kept releasing new machines with the same brand (Color Computer, Model 100, Model 2000) but without the same software base. Apple promised not to make that same mistake.

It was 80-column text that separated the TRS-80 from the Apple II; sure, the II had color, but you didn’t always need to type a document in color. You didn’t see an Apple II in a business without an 80-column card installed. But many companies besides Apple produced 80-column cards, the most successful of which was (if you can believe this) Microsoft. To own the market in business computers and fend off a rumored invasion by IBM, Apple needed an 80-column machine.

The fact that the Apple III ran on an upgraded version of the II’s 6502 processor appeared, at first, to indicate that it would extend the existing platform. It didn’t, exactly. To run Apple III native software, you needed Apple’s new operating system, which the company literally (not a joke) called SOS. You could run in Apple II mode, but since Apple II word processors of the day expected 80-column cards, they often didn’t take well to the Apple III’s own 80-column mode. What’s more, due to a certain relentless Apple executive’s insistence that Apple products should never need a fan, the Apple III circuit boards would overheat and the chips would pop out of their sockets (something I witnessed personally twice). The lesson learned here: No Apple product should ever hit the street until it’s been battle-tested under extreme conditions.

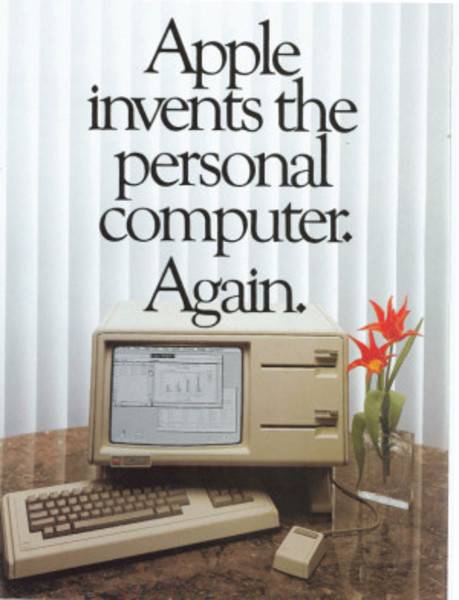

2. Lisa (1983)

I’ve talked about the lesson of never releasing a platform without the software to back it up. I’ve also alluded to the fact that people don’t plunk down four-digit investments (or, in Lisa’s case, just shy of five) for prototypes. Those lessons would be learned a year later; there was a simpler one that came first.

We had been reading about the promise of graphical computing in magazines like Creative Computing and Byte as early as 1976. By “we,” I mean those of us enthusiasts who had memorized the “memory maps,” as they were then called, of all the computers on the open market. So we were ready for Apple to champion the ideas that Xerox had put forth and then let languish. That Apple would be the first to step up to the plate was no surprise.

But Lisa was too much of a stretch, even for smart folks. It was certainly graphical, and there was this mouse, but it was not yet intuitive like Macintosh. You had this desktop, where there was this source of “paper.” You tore off that paper, and it showed up blank. Then you did stuff to the paper, which required invoking a function by way of an application. You didn’t just launch LisaDraw or LisaWrite and get to work.

I was present in the room on the day Lisa was unveiled at six computer conferences nationwide. In fact, I actually unboxed the unit and plugged it in. After a while, I turned it on. There was no manual. There was a system disk, but the process of loading it wasn’t anything like using a Disk II unit. So I guessed wrong several times. Spectators, who eventually numbered a few hundred, shouted out suggestions. After an hour or so, they started placing bets on their guesses about how these programs were supposed to work. This lasted into the night.

As amazing as it sounds in retrospect, whereas Steve Jobs would introduce the Mac and subsequent products to the world, the Apple III and the Lisa were delivered in wooden crates (like the one for the “leg lamp” in the movie “A Christmas Story”) to computer conferences to be unboxed by…well, by me and folks like me. At least the Lisa didn’t blow up on me. But the lesson here for Apple was obvious: Buyers are skeptical by nature. You can’t unveil a product to a room full of buyers and expect it to sell itself. People need to be shown.

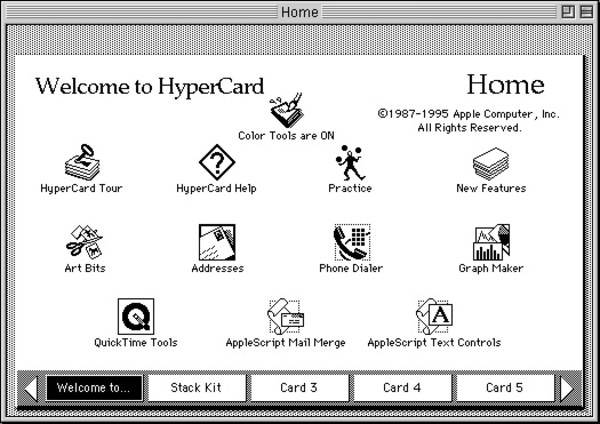

1. HyperCard (1987)

Today we rarely think of Apple as a software company. But in 1987, Apple developer Bill Atkinson led the production of a piece of software that did indeed change the world, but not for Apple. Because the company (again, not under Jobs’ direction at this time) failed to recognize the full potential of HyperCard, it made a critical design decision that handicapped it. During its waning years, a completely independent developer recreated the HyperCard model without the handicap. And you’re using it now.

HyperCard was kind of like a database, kind of not. It utilized a forms-based model, which Apple started out calling “cards,” but which came to be called “pages.” Pages were designed using graphical tools, including a variety of input gadgets like text boxes, drop-down lists, and sliders. These controls could be bound to elements of data, and interacting with that data triggered events that could be handled by short scripts. Pages were linked to one another by way of something called “hyperlinks.”

It was the world in Apple’s hands, and without Jobs, Apple let it slip through its fingers. I was an early tester of HyperCard, so I remember the design decision. Groups of cards that worked together were called “stacks.” There was talk about whether stacks should be made more interoperable – namely, by way of a naming convention where resources in stacks could address resources in other stacks, creating a type of interleaving or web-like structure. Evidently the fear was that an Apple competitor would take advantage of such a convention to leverage its own database platform, so the prospect went unexplored.

Three years later, a British HyperCard veteran working at CERN in Switzerland came up with a kind of naming convention for networked resources. It was a design concept so obvious that a physicist could produce it in his spare time. The lesson learned here – one which Steve Jobs would never let Apple forget after his comeback – was this: Never underestimate your own potential.