During my trip to Boston earlier this year, I had the opportunity to visit MIT. At the end of a long day of meetings with various MIT tech masterminds, I made my way to the funny shaped building (see photo right-below) where the World Wide Web Consortium (W3C) and its director Tim Berners-Lee work. Berners-Lee is of course the man who invented the World Wide Web 20 years ago.

This was my first meeting with the Web’s creator, whose work and philosophy was a direct inspiration for me when I launched ReadWriteWeb back in 2003.1

Editor’s note: This story is part of a series we call Redux, where we’ll re-publish some of our best posts of 2009. As we look back at the year – and ahead to what next year holds – we think these are the stories that deserve a second glance. It’s not just a best-of list, it’s also a collection of posts that examine the fundamental issues that continue to shape the Web. We hope you enjoy reading them again and we look forward to bringing you more Web products and trends analysis in 2010. Happy holidays from Team ReadWriteWeb!

After shaking hands, I told Tim Berners-Lee that this blog’s name was in part inspired by the first browser, which he developed, called “WorldWideWeb“. That was a read/write browser; meaning you could not only browse and read content, but create and edit content too. It was a shame then when Mosaic, a read-only browser, became the first mainstream Web browser in the mid-90s. It wasn’t until the rise of Web 2.0 that the read/write philosophy gained widespread acceptance.2 On that note, we launched into the interview…

Note: the interview was published in two parts, with Part 1 on the topic of Linked Data. Part 2 explored other topics and can be found here.

How Linked Data Relates to The Semantic Web

RWW: Earlier this year you gave an inspiring talk at TED about Linked Data. You described Linked Data as a sea change akin to the invention of the WWW itself – i.e. we’ve gone from a Web of documents to a web of data. Can you please explain though how Linked Data relates to the Semantic Web, is it a subset of it?

TBL: They fit in completely, in that the linked data actually uses a small slice of all the various technologies that people have put together and standardized for the Semantic Web.

Linked Data uses a small slice of the technologies that make up the Semantic Web.

We started off with the Semantic Web roadmap, which had lots of languages that we wanted to create. [However] the community as a whole got a bit distracted from the idea that actually the most important piece is the interoperability of the data. The fact that things are identified with URIs is the key thing.

The Semantic Web and Linked Data connect because when we’ve got this web of linked data, there are already lots of technologies which exist to do fancy things with it. But it’s time now to concentrate on getting the web of linked data out there.

Web inventor Tim Berners-Lee and ReadWriteWeb founder Richard MacManus

How Linked Data Has Evolved via Grassroots

RWW: Linked Data has had a lot of grassroots support, which you mentioned in your TED speech. This is something Semantic Web technologies, such as RDF, have struggled to get over the years. Has the W3C been pushing the more bottom-up Linked Data world, because of the frustration over lack of take-up of top-down Semantic Web?

TBL: A lot of the initial RDF and OWL projects came out of the academic world; and some of them were projects to show what you could do in a closed world. And the files were zipped up and left on a disc. While they were interesting projects, and while the systems were useful systems, the Semantic Web community maybe missed the point of the ‘web’ bit and focused too much on the ‘semantic’. However the work that’s been done in the Semantic Web, the standards, was really valuable. It’s relatively recently for example that SPARQL [an RDF query language] has been developed.

“It’s time now to concentrate on getting the web of linked data out there.”

Somebody drew an analogy the other day: can you imagine trying to promote a world of databases without SQL? Even though it’s not an interoperable protocol, it’s just a query language. So similarly, all that’s been put into RDF, rdfs and OWL is very valuable to the linked data community.

The Linked Data community tend to use a subset of that [Semantic Web technologies], of OWL for example. But they certainly use SPARQL. So you could argue that really it wasn’t ready to be deployed widely.

Linked Data started as a very informal Design Issues note that I put in; it was a grassroots movement from very early on. So yes W3C has been emphasizing the importance of Linked Data. It’s been the Semantic Web Interest Group of course, and various [other Semantic Web] activities, which has been pushing it. But also Linked Data has been seized on – a group of people for example put together DBpedia.3 That wasn’t commissioned, that was that they just thought it would be a really cool idea.

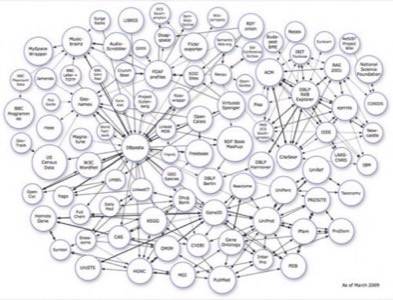

Graph of Linked Data sets on the Web, as at March 2009

Linked Data and Governments

RWW: In a recent Design Issues note, you urge governments to put their data online as Linked Data (although you’d also be happy for governments to just make available the raw data – presumably so that others can then structure it). What do you realistically expect, for example, the U.S. or U.K. governments to do over the next year? And in the near future, do you foresee different governments interconnecting their Linked Data sets?

TBL: One can’t generalize, governments are (like most big organizations) fascinatingly diverse inside them. So you’ll find that there are places inside governments where you get a champion who gets linked data and who’s just written a script and produced some linked data. So in the UK government for example, you’ll find there’s RDFa [in the code of its website] for civil service jobs. So if somebody wants to make a database of all the jobs, they can do that very easily.

“The first step of actually putting the data out there is the one that nobody else can do.”

There are other cases where the easiest thing for somebody to do is to just put data up in whatever form it’s available. Comma separated values (CSV) files are remarkably popular. They’re exported sometimes from spreadsheets. It’s remarkable how much information is in spreadsheets. Or sometimes pulled out of a database and then put up on the web. It’s not as good, not as useful to the community, as if Linked Data had been put up there and linked. But the first step of actually putting the data out there is the one that nobody else can do.

Data.gov, a catalog of public data, was launched in May by the U.S. government

The way to go is for government departments to go the extra step and convert [their data] into Linked Data. One of the nice things about Linked Data, when they have a pile of it, is that they could run a SPARQL server on it. SPARQL servers are a commodity product, a solution for all of the people who say ‘but actually I wanted to have XML.’ A SPARQL server will generate an XML file [and] allow somebody to write out, effectively, a URL for the XML file.

“Linked Data is the backplane, it’s the thing that you connect to in both directions.”

In fact, I don’t see why SPARQL servers shouldn’t provide CSV files, something which as far as I know isn’t in the standards. But I’d recommend it, certainly in government context, because CSV files are what people have and what people want.

So the message [for government] is to use RDF. Linked Data is the backplane, it’s the thing that you connect to in both directions. As a [web] producer your job is to make sure that you produce Linked Data one way or another. And as a consumer, there are lots of ways to consume that data once it’s out there as Linked Data.

In Part 2 of this interview we discussed: how previously reticent search engines like Google and Yahoo have begun to participate in the Semantic Web in 2009, user interfaces for browsing and using data, what Tim Berners-Lee thinks of new computational engine Wolfram Alpha, how e-commerce vendors are moving into the Linked Data world, and finally how the Internet of Things intersects with the Semantic Web. Read Part 2 here.

Footnotes:

1. The very first sentence written on this blog, on 20 April, 2003, was: “The World Wide Web in 2003 is beginning to fulfill the hopes that Tim Berners-Lee had for it over 10 years ago when he created it.”

2. For more on read/write browsers, you can read another early RWW post entitled What became of the Browser/Editor.

3. DBpedia is a community project to extract structured information from Wikipedia; see ReadWriteWeb’s profile of this and similar resources.