The Internet of Things (IoT) is touted by many as the next industrial revolution. The IoT has become increasingly mainstream in recent years, and leading analyst firms predict that the next decade of continuous IoT development will spur global revenue growth of $2 trillion to $3 trillion or more. Industry experts also agree that the industrial sector has the most to gain from this technological revolution, and that the Industrial Internet of Things (IIoT) will likely drive the lion’s share of overall IoT revenue growth.

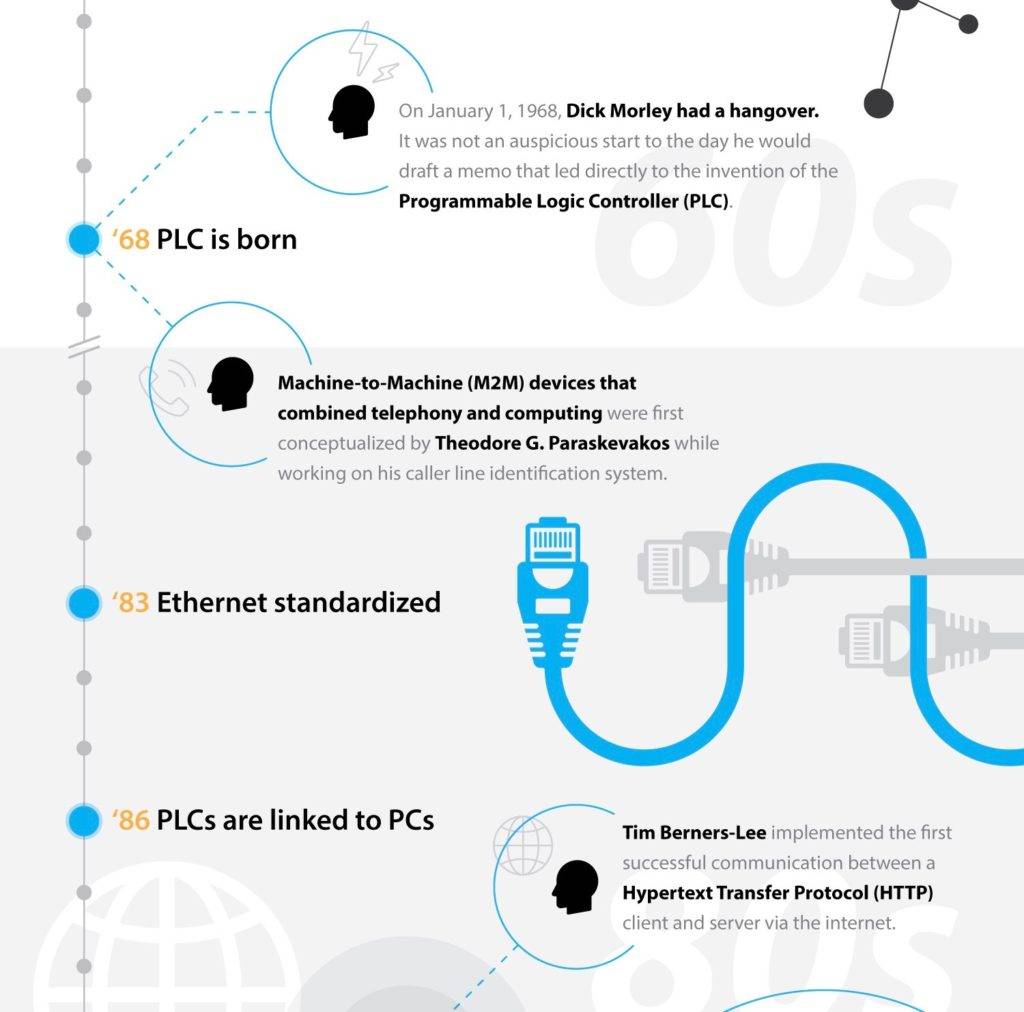

It has been a long road to today’s IIoT revolution, and while consumer innovation has played a large role, much of that road was paved with industrial sector innovation. As technology continues to evolve at an unprecedented pace, the future of IIoT is on the minds of investors and technology end users alike. But we can’t know where we’re going until we know where we’ve been, and to understand the future of the IIoT we must start way back in 1968.

Humble beginnings

Despite a hangover from the previous night’s New Year’s Eve festivities, engineer Richard (Dick) Morley drafted a memo on January 1, 1968, that ultimately led to the invention of the programmable logic controller (PLC). His creation, the Modicon, contributed greatly to General Motors’ manufacturing capabilities and significantly influenced the future of the automation industry.

Dick was not the only one busy in 1968. With hopes of creating an “apparatus for generating and transmitting digital information,” American inventor and businessman Theodore G. Paraskevakos was working on the world’s first machine-to-machine (M2M) devices. Morley’s PLC and Paraskevakos’s M2M devices were the first baby steps taken on the long road to today’s IIoT.

Laying the foundation for connectivity

Fast-forward to the 1980s and two critical IIoT milestones. The standardization of Ethernet connectivity in 1983 laid the groundwork to physically connect machines from different manufacturers. Six years later, Sir Tim Berners-Lee, a computer scientist at CERN, the European Organization for Nuclear Research, built on this networking groundwork with the invention of a little thing called the World Wide Web. Berners-Lee conceived and developed the Web to meet the demand for automatic information-sharing between scientists in universities and institutes around the world.

While the “web” was still in its infancy, the industrial sector set its sights on interoperable connectivity on the plant floor. A group of vendors assembled to address a growing concern referred to as the “Device Driver Problem.” This group of about a half dozen companies included Fisher-Rosemount, Intellution, and Rockwell Software. This was the first meeting of what we know today as the OPC Foundation.

Connectivity, collaboration, and cooperation

When these industrial solution vendors first convened, their human-machine interface (HMI) and supervisory control and data acquisition (SCADA) solutions were developed with proprietary communication protocols or driver libraries. As best-of-breed solutions emerged, and industrial end users began to build integrated architectures with solutions from multiple vendors, the need to enable communication across traditionally disparate machines became clear. Vendors had to either invest resources in developing application-level functionality or start creating more inclusive connections across solutions, including competitors’.

Some vendors decided to create their own Application Programming Interfaces (APIs) or Driver Toolkits. Although this solved their own connectivity issues, it limited how end users could integrate additional solutions. Luckily, the market soon persuaded the vendors to collaborate and make changes that were in the end users’ best interests.

The OPC Foundation forced many competing vendors to work together to solve connectivity problems perpetuated by proprietary communication protocols. The need for more interoperable solutions was further highlighted in 1995, as Microsoft Windows gained dominance of the plant floor. Windows 95 was the first commercial off-the-shelf (COTS) operating system (OS) with plug-and-play capabilities to support easy integration with hardware, and it allowed users to interact with graphical elements and controls similar to the HMIs already being used in the factory. Windows 95 and NT 4.0 were also more developer-friendly and cheaper than their industrial automation counterparts. As it became clear that Microsoft Windows was the ubiquitous OS to build around, industrial software development began targeting it as the platform of choice.

The late 1990s also included major advancements in wireless M2M technology. Ethernet, then a quarter of a century old, emerged as the universal connectivity standard in industrial settings. Interface standards began to differentiate by industry: DNP3 and IEC 61850, which now dominate the power industry; BACnet in building automation; and additional standards such as Profibus, CC-Link, and HART. Consortiums for each of these standards began to form. The industrial sector was rapidly evolving towards the IIoT that we know today.

Taking IIoT to the masses

With a ubiquitous OS and Ethernet backbone in place, more and more industrial devices became connected. In 1999, Kevin Ashton, a British technology pioneer, carried the connectivity theme into the new millennium, coining the term “Internet of Things” to describe a system where the Internet is connected to the physical world via sensors. Connectivity was even made possible for legacy devices, a trend that would prove key in industrial settings, where equipment is expensive and considered a longer-term investment.

Perhaps the most significant IIoT milestone of the early 2000s was the advent and widespread adoption of cloud technologies. The introduction of Amazon Web Services in 2002 brought the cloud to the masses and forever changed the way enterprise and industrial architectures were built and utilized. Fourteen years later, the cloud and virtual machines are still presenting new opportunities for the IIoT.

In the mid-2000s, as the consumer world acquired smartphones, the industrial world acquired smaller, more intelligent PLCs and Distributed Control Systems (DCSs). Hybrid controllers and Programmable Automation Controllers (PACs) emerged, and legacy hardware evolved as battery and solar power became more reliable and economical. Manufacturers could power sensors across a distributed architecture, like an oil pipeline, to bring intelligence and connectivity to the farthest reaches of an organization. The combination of widespread power sources and connectivity with smart devices began to add meaningful context to industrial data.

Data transforms into information

Context transformed data into information, and the industry turned again to the OPC Foundation to solve emerging challenges around communicating this contextual data. In 2006, the Foundation responded with the OPC UA protocol, which many still rely on today. OPC UA built on existing standards while accommodating new technology and advancements. It decoupled the API from the wire and was designed to fit into field devices, control layer applications, Manufacturing Execution Systems (MES), and Enterprise Resource Planning (ERP) applications. Its generic information model supported primitive data types (such as integers, floating point values, and strings), binary structures (such as timers, counters, and PIDs), and XML documents. To this day, OPC UA delivers an interoperability standard that provides data access from the shop floor to the top floor.
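
To make the three kinds of data in that information model concrete, here is a minimal Python sketch. It is an illustration only and does not use or reproduce the OPC UA API; the `Node`, `TimerValue`, and `describe` names, along with the example tag names, are hypothetical.

```python
from dataclasses import dataclass
from typing import Union
import xml.etree.ElementTree as ET


@dataclass
class TimerValue:
    """Hypothetical structured value, standing in for a PLC timer."""
    preset_ms: int
    elapsed_ms: int
    done: bool


Primitive = Union[bool, int, float, str]
Value = Union[Primitive, TimerValue, ET.Element]


@dataclass
class Node:
    """Hypothetical named data point; not the OPC UA node definition."""
    name: str
    value: Value


def describe(node: Node) -> str:
    """Classify a node's value as an XML document, a structure, or a primitive."""
    if isinstance(node.value, ET.Element):
        return f"{node.name}: XML document <{node.value.tag}>"
    if isinstance(node.value, TimerValue):
        return f"{node.name}: structure ({type(node.value).__name__})"
    return f"{node.name}: primitive ({type(node.value).__name__})"


nodes = [
    Node("Line1.Speed", 42.5),
    Node("Line1.CycleTimer", TimerValue(preset_ms=5000, elapsed_ms=1200, done=False)),
    Node("Line1.Recipe", ET.fromstring("<recipe><step>mix</step></recipe>")),
]
descriptions = [describe(n) for n in nodes]
for line in descriptions:
    print(line)
```

The point of the sketch is that one generic container can carry a raw reading, a structured controller value, or a whole document, which is roughly what lets the same data be consumed by both plant-floor and enterprise applications.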

By 2010, machine and operational data began to yield real value, and more organizations sought to store and analyze their data over time. In response, the data historian market took off and sensor technology experienced significant price drops. This affordable and flexible intelligence and connectivity would bring many “brownfield” (pre-existing) industrial architectures into the IIoT age, as more and more legacy devices were bolstered with sensor intelligence and connectivity. Additionally, advances in personal computing and edge devices gave organizations even more flexibility to access and analyze data from anywhere and at any time. IT industry leaders, including Citrix and Intel, began openly discussing best practices for the growing bring-your-own-device (BYOD) trend.

IIoT today, tomorrow, and beyond

Over the last six years, all the pieces have fallen into place to solidify a real and meaningful vision for the future of the IIoT. Robust industrial connectivity, advanced analytics, condition-based monitoring, predictive maintenance, machine learning, and augmented reality are the concepts that define the future of the IIoT, and they are backed by viable technology that’s available today. Technology leaders, including GE, IBM, PTC, and many more, are betting on the future of the IIoT in a big way. Over the last two years, major investments in innovation and acquisitions have further refined these emerging IIoT platforms.

It has been a long road since Richard Morley’s hangover-resilient idea for the PLC, but as more attention and resources are dedicated to the advancement of the IIoT, even bigger things are expected across wider markets. According to a recent business intelligence report, nearly $6 trillion will be spent on IoT solutions over the next five years and businesses will be the top adopter of IoT solutions.

With so much at stake, there will undoubtedly be major shifts in the industrial world. As rules change and technology develops, roles will evolve and business structures will adjust. For example, traditionally disparate operations technology and IT divisions are starting to collaborate and even merge. We hear about these role shifts from our customers, and the increasing use of traditional IT standards (such as MQTT, WAMP, and XMPP) within the industrial sector is further evidence of this transformation. And as integrated, accessible data becomes the norm, data scientists who can interpret that data are increasingly moving into executive decision-making roles.

While it is difficult to predict exactly how the IIoT will evolve, it is clear that we are reaching a tipping point in this new industrial revolution. As more devices become connected and more data is created to feed into increasingly powerful analytics and artificial intelligence programs, there is seemingly no limit to the advances that can be made around the IIoT.