Can websites get visitors to prove they’re human by forcing them to make a moral choice? This is not a hypothetical question.

I wrote an article for ReadWriteWeb this summer about a way to replace those annoying CAPTCHAs with a miniature game. Website operators put CAPTCHAs in place to check for bots or spammers who are trying to gain access, perhaps to set up a bunch of accounts automatically, but legitimate visitors have to put up with them too.

Computer scientists at Carnegie Mellon University developed CAPTCHAs in 2000 and they have been popping up pretty much everywhere online ever since.

Many spammers use various methods to defeat them, including paying real people virtual slave wages to input the values or using special optical character recognition (OCR) software. As the bad guys get better at defeating the tests, the result is a continuing technology war to differentiate the bad actors from the legitimate site visitors.

The CAPTCHA tests have had to get harder to read and parse out. That means they run the risk of keeping real visitors from the sites that are running the tests. So there’s been a full-on search for better ways to make the distinction than reading a few distorted letters.

In my earlier article, I mentioned Play Thru, which invites users to solve a game, such as figuring out what ingredients are used to make pancakes. One programmer wrote about a way around the Play Thru system last week.

The Moral Equivalent Of A Robot

But now we’re taking the concept to a new level – using complex moral problems to establish humanity. (The idea is not exactly new, as shown by this XKCD comic that attempts to play on arcane human knowledge.)

It makes a certain amount of sense, since CAPTCHAs are probably the best-known members of a class of problems based on the Turing test, which tries to differentiate a human from a computerized source.

The tests are named after Alan Turing, a brilliant mathematician and code breaker who developed many of the original tenets of computer science well before we had actual computers, let alone ones that would fit in the palms of our hands. Turing played a key role in breaking German codes during World War II and in developing early computational algorithms. The CAPTCHA is actually a reverse Turing test: instead of a human judging whether the other party is a machine, a computer judges whether the visitor is a human or a bot.

Beyond simple letter recognition, though, as CAPTCHAs become more complex, they take on the dimensions of the fictional Voight-Kampff Test – the question-and-answer field test that Harrison Ford’s cop character used in the movie Blade Runner to try to determine if a subject was man or machine. (The Voight-Kampff Test was also used some 10 years ago to determine if San Francisco mayoral candidates were actually human – one candidate recognized the test. He didn’t win.)

The Next Step For CAPTCHA

In this post on the Sophos Naked Security blog, there are some pretty funny examples of really difficult tests that most of us would have a hard time passing.

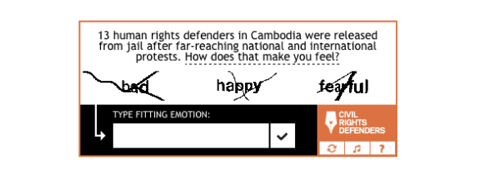

But last week there was another innovation, labeled “CAPTCHAs With A Conscience.” The idea, from the Swedish activist organization Civil Rights Defenders, is to present the viewer with a politically loaded statement (about prisoners being tortured, or gay-bashing) and ask how it makes them feel.

That’s currently beyond the scope of most bots – but frankly it seems that the real goal here is political awareness, not bot protection. Of course, soon enough we will have computers that can correctly interpret human feelings, but it is an intriguing approach nonetheless.

How do you feel about this approach? And can you prove that you are really human when you reply?