On 25 and 26 October 2017 in Seoul, Arkadiusz Włodarczyk and I had the opportunity to represent Samsung Poland R&D at SOSCON 2017. The main goal of this annual conference is to promote open source solutions and show how they can be used to improve the world around us. At SOSCON 2017 we presented the Smart Sensor Array system. The idea behind our project was to show how the Samsung ARTIK 053 boards enable fast prototyping for business. We demonstrated this by building a “do it yourself” sensor, monitoring and control system based on the ARTIK 053 running Tizen RT, in conjunction with the open source Node-RED IoT management system. The brief description of our project for the conference stated:

The Smart Sensor Array is a system that can automatically detect Internet of Things sensor devices with web-based management and monitoring.

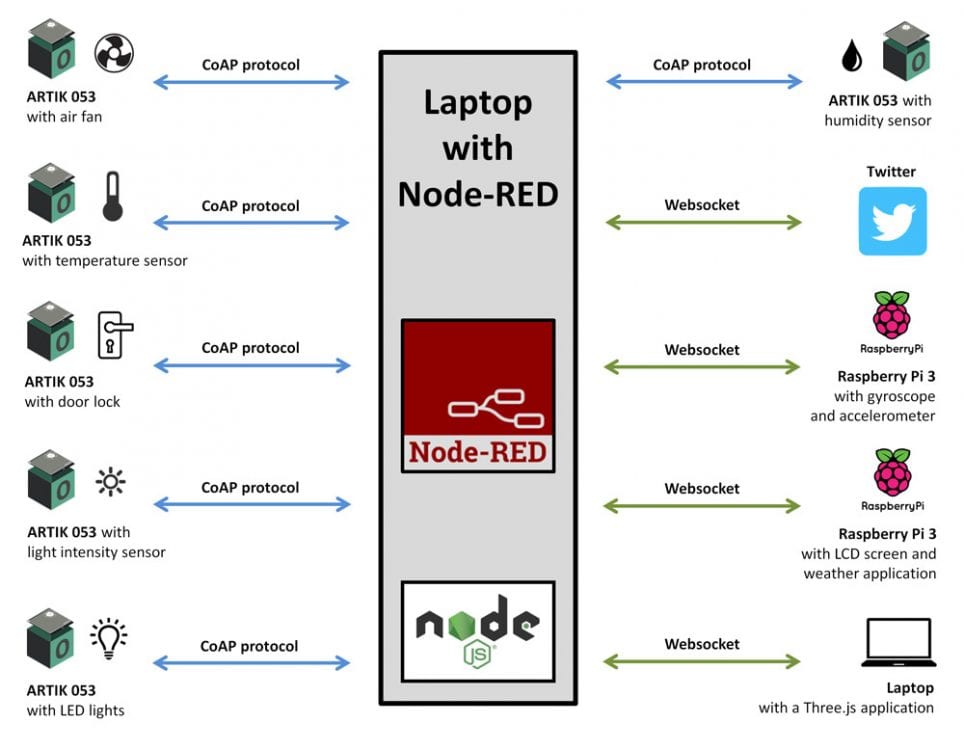

The hardware of our project consisted of six ARTIK 053 boards and two Raspberry Pi 3 boards. They were all powered from power banks so that the devices could be moved around easily. Grove Base Shield extensions were mounted on top of the ARTIK 053 boards to provide easy plug-in I2C connectivity for sensors and relays. Our device setup is summed up in the picture below.

So how did the Smart Sensor Array work? First of all, we created data flows in Node-RED to determine how the data would circulate inside our IoT project. That meant creating input points and output points, filters and decision blocks. Node-RED is a node-based data flow creation tool whose purpose is to let you build IoT data flows in minutes, which makes it an ideal tool for fast prototyping. Our data scheme was simple. The computer running the Node-RED server aggregated the data from the three sensor devices, which were ARTIK 053 boards running CoAP servers written in C. It then visualized the data with gauges, charts and numerical readouts on the Node-RED dashboard, displayed on a 40-inch TV.
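The input, filter, decision and output stages described above can be sketched in plain Python. This is only an illustration of the data-flow idea, not the actual Node-RED flow: the names `Reading`, `in_range`, `needs_cooling` and `run_flow`, the value ranges and the 28 °C threshold are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class Reading:
    """One sample from a sensor device (all field names are hypothetical)."""
    device: str   # e.g. "artik-1"
    kind: str     # e.g. "temperature"
    value: float


# Plausible value ranges per sensor kind; assumed for this example only.
VALID_RANGES: Dict[str, Tuple[float, float]] = {
    "temperature": (-40.0, 85.0),  # degrees Celsius
    "humidity": (0.0, 100.0),      # percent relative humidity
}


def in_range(reading: Reading) -> bool:
    """Filter block: drop unknown kinds and out-of-range values."""
    bounds = VALID_RANGES.get(reading.kind)
    if bounds is None:
        return False
    low, high = bounds
    return low <= reading.value <= high


def needs_cooling(reading: Reading, threshold_c: float = 28.0) -> bool:
    """Decision block: True when a temperature reading exceeds the threshold."""
    return reading.kind == "temperature" and reading.value > threshold_c


def run_flow(readings: Iterable[Reading]) -> Tuple[Dict[str, float], List[str]]:
    """Pass readings through the filter and decision blocks.

    Returns the latest valid value per "device/kind" key (what a dashboard
    would display) and the devices that triggered the decision block.
    """
    dashboard: Dict[str, float] = {}
    alerts: List[str] = []
    for reading in readings:        # input point
        if not in_range(reading):   # filter
            continue
        dashboard[f"{reading.device}/{reading.kind}"] = reading.value  # output
        if needs_cooling(reading):  # decision
            alerts.append(reading.device)
    return dashboard, alerts
```

In Node-RED each of these functions would be a separate node wired to the next one, which is what makes it quick to rearrange a flow during prototyping.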

At the same time, the user could manually control the other three devices through on-screen sliders on the dashboard. For example, dragging a slider changed the brightness of the LED lights or the speed of the fan, and clicking a button opened the electric door lock. Node-RED was also connected to OpenWeatherMap and continuously updated the weather application with the current conditions in the Jamsil district of Seoul. The 3D application received data from the Raspberry Pi 3 with the attached gyroscope and accelerometer sensor, as well as text data from Node-RED, which it displayed as 3D text behind the on-screen 3D model of the device.
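As a rough illustration of the slider control, the sketch below maps a slider position to an actuator level. It assumes a 0 to 100 slider and an 8-bit output value (0 to 255), neither of which is stated above, and `slider_to_duty` is a hypothetical helper name.

```python
def slider_to_duty(percent: int, max_duty: int = 255) -> int:
    """Map a 0-100 slider position to an integer level in 0..max_duty.

    Out-of-range input is clamped, so a faulty dashboard message can never
    drive the LED or fan beyond its limits.
    """
    clamped = max(0, min(100, percent))
    # Integer arithmetic keeps the result exact and always within range.
    return clamped * max_duty // 100
```

Clamping on the receiving side is a deliberate choice here: the device stays safe even when a value arrives from somewhere other than the slider.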

It is worth mentioning that our system could publish information about the state of its devices to Twitter under the #smartsensorarray hashtag. For example, we could tweet the current air temperature, air humidity or fan speed. Most interesting of all, users could also control the Smart Sensor Array lights and fan speed from their own phones. They just needed to post special commands under the #smartsensorarray hashtag to change the fan speed or the intensity of the LED lights.
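The exact command format is not given here, so the sketch below assumes a hypothetical syntax of the form `fan 70` or `light 30` (a target followed by a percentage) somewhere in a tweet that carries the hashtag. `parse_command` is an illustrative name, and fetching tweets from Twitter is deliberately left out.

```python
import re
from typing import Optional, Tuple

HASHTAG = "#smartsensorarray"

# Assumed syntax: "fan <0-100>" or "light <0-100>", case-insensitive.
COMMAND_RE = re.compile(r"\b(fan|light)\s+(\d{1,3})\b", re.IGNORECASE)


def parse_command(tweet: str) -> Optional[Tuple[str, int]]:
    """Extract a (target, level) pair from a tweet, or None if there is none.

    Tweets without the hashtag, without a recognizable command, or with a
    level above 100 are ignored.
    """
    if HASHTAG not in tweet.lower():
        return None
    match = COMMAND_RE.search(tweet)
    if match is None:
        return None
    target = match.group(1).lower()
    level = int(match.group(2))
    if level > 100:
        return None
    return target, level
```

A call such as `parse_command("#smartsensorarray fan 70")` yields a pair that can be passed on to whatever drives the fan, while anything unrecognized is dropped rather than guessed at.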

We had quite a lot of visitors at our exhibition: professionals, students, high school pupils and developers from different companies and countries. They asked a wide variety of questions, mostly about the hardware (the ARTIK 053 specs and capabilities) and about the software and frameworks we had used to create the Smart Sensor Array system. The visitors also played around with the Smart Sensor Array by moving the sliders on the dashboard and tweeting commands to the system. We also gave them the opportunity to live code our system using Node-RED, and they enjoyed our fast prototyping environment. Everyone seemed to appreciate our effort and wished us good luck in our future Internet of Things projects.

As for the conference itself, SOSCON was full of great projects and exhibitions. For example, next to our stand Samsung R&D Moscow presented a Passport Validation System based on OpenCV ultraviolet pattern detection. Their scanning device was packed inside a nicely carved, stylish wooden box. Other companies were present too, such as Mand.ro, which showed 3D-printed automated prosthetic arms for amputees and path-search robots.

An interesting project was built and exhibited by an individual, a talented high school student who created a large drone named WAG. The acronym stands for water, air and ground. And yes, the drone can operate in all of those environments and switch between them instantaneously. It was a remarkable piece of work by the young constructor.

Another interesting booth belonged to the Fuse company. Their system lets you create and deploy beautiful animated cross-platform applications. The authors of Fuse state on their website: “Fuse is a real-time development environment where the app can be modified while it is running side by side on multiple platforms”. And yes, I checked it myself: they plugged my private devices into their system, and the real-time, cross-platform development really works.

Two more booths I visited were the Tizen RT Things SDK booth and the Tizen .NET booth. The first presented new tools for fast and easy Tizen RT programming and deployment; the second focused on creating Tizen applications using C# and the .NET environment.

The last exhibition I managed to visit was a labyrinth game. Using the Samsung Gear S3 smartwatch, visitors could take part in a tournament, making their way through virtual mazes full of traps, quests, items and bonuses. And yes, as you might have guessed, the grand prize for the tournament winner was a Samsung Gear S3 smartwatch.

All in all, in my opinion, SOSCON 2017 was a very good conference packed with interesting content, great exhibitions and talented people. It is definitely worth attending for anyone who is interested in or works in the new technologies sector.

This is a guest post by Bartłomiej Bartel
