A recent study reports that US broadband households own more than 10 connected devices. What will happen when those devices start talking and working together? Will your home become aware? How all these connected devices will work together is still unknown, and figuring it out is a top priority. The industry is focused on realizing the full potential of interoperability, so that your home can go from a smart home to an aware home.

The Smart Home — The Aware Home

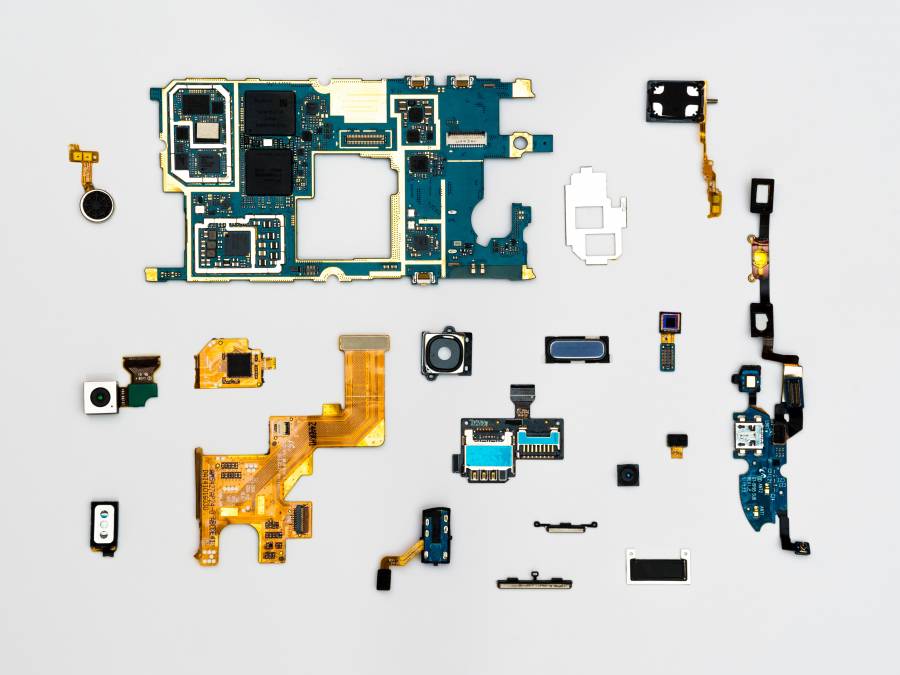

Emerging technology will enable tomorrow’s smart homes to function as single, integrated systems rather than as collections of intelligent devices that may communicate with one another but otherwise operate independently.

We’ve seen some of this transformation happen already in the industrial space, as complex production and distribution systems are using smart technology to monitor overall operations (instead of relying on functions to operate individually). Businesses have traditionally focused on such “systems level” solutions, and their concerns about reliability and security have encouraged a focus on integrity as well as integration.

Such functionality is less apparent when it comes to the consumer: a study from McKinsey sees a compound annual growth rate of 31% in the number of connected homes since 2015 but identifies the lack of integration (fragmented technology) as the next hurdle to overcome.

All smart home items working together.

I think it’s only a matter of time before our homes become as capable as what we see in industry, and more. It won’t be because consumers buy complex solutions; instead, things will simply work better on their own and work together better, too. Key to enabling this are Edge Computing and a form of device integration known as “Swarm Computing.”

A word on the terms: Swarm Computing, Edge Computing, and fog. The “edge” is the edge of the cloud, meaning devices that are not exactly “in” the cloud but sit on its edge. Then there is fog.

Edge Computing is best known for reducing latency and for protecting our privacy and security. But it is also the key to enabling interoperability, or an Aware Home, because it allows smart devices to communicate and allocate computing resources locally. The devices in your home are then not necessarily dependent on the cloud and can share insights directly with one another. The results of device actions will arrive faster and more securely than they do today.
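To make the idea of devices communicating locally a little more concrete, here is a minimal Python sketch of an in-home message hub. Everything in it is an assumption made for illustration: the `LocalHub` class, the topic name `motion/hallway`, and the device reactions are hypothetical and do not describe any vendor’s product or protocol.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

# A handler is any function that accepts an event dictionary.
Handler = Callable[[dict], None]


class LocalHub:
    """Hypothetical in-home message bus: events never leave the house."""

    def __init__(self) -> None:
        # Maps a topic name to the handlers subscribed to it.
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register a device's reaction to events on a topic."""
        self._handlers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver an event to every local subscriber; return how many received it."""
        handlers = self._handlers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


# Illustrative usage: one motion event triggers two other devices locally.
hub = LocalHub()
actions: List[str] = []
hub.subscribe("motion/hallway", lambda event: actions.append("hall light on"))
hub.subscribe("motion/hallway", lambda event: actions.append("thermostat: occupied"))
delivered = hub.publish("motion/hallway", {"detected": True})
print(delivered, actions)
```

The point of the sketch is only that the motion sensor, the light, and the thermostat coordinate without a round trip to a remote server; a real home would add discovery, authentication, and a network transport on top of this.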

The overall system learns and acts by working together.

Such a home would serve as an active collaborator in managing technologies, not just host to their independent functions. The benefits could be immense. For instance:

- Home security could evolve from the blunt use of motion detection and secret codes to using information from other devices to recognize you or others; it might also add a filter on what those 10 devices in your home choose to share with the world.

- It could monitor the state of seniors (not just their position) and notify caretakers if attention is needed; similarly, timers could interact with interior and exterior context to, say, inform you if your workday morning routine is running behind schedule.

- An aware home would enable more natural and nuanced interaction with devices, which will be able to use facial and voice recognition not just to receive commands but to register their urgency and other emotional attributes, and to react to the context (e.g., providing a more sensitive response to “where are my car keys” if other sensors in your house report that you’ve spent 20 minutes looking for them).

Think human body versus a collection of smart limbs: your fingers sense objects and push buttons.

Your fingers don’t consider music preferences or calculate budgets; similarly, your stomach might send a signal that it wants to be fed, but your mind steps in and belays that order. So, all of this will be taken care of for you without you having to think about it.

Microsoft, Amazon, Google, and others in the industry are continually looking to build more intelligence and connectivity.

If these companies can put all of this into the processors they use, those processors can run neural networks and classical machine learning (“ML”) algorithms such as support vector machines (SVMs), random forests, and decision trees. An SVM is a discriminative classifier formally defined by a separating hyperplane, which it learns from labeled training data. These processing advancements are driving progress in secure facial recognition, voice control, and immersive audio capabilities in smart home devices.
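The “separating hyperplane” idea can be shown in a few lines of Python. This is a sketch under stated assumptions, not a trained model: the weights, bias, and feature values below are hand-picked and hypothetical, whereas a real SVM would learn the weights and bias from labeled training data by maximizing the margin between classes.

```python
from typing import Sequence


def decision_value(weights: Sequence[float], bias: float,
                   features: Sequence[float]) -> float:
    """Signed score w . x + b; its sign says which side of the hyperplane x is on."""
    return sum(w * x for w, x in zip(weights, features)) + bias


def classify(weights: Sequence[float], bias: float,
             features: Sequence[float]) -> int:
    """Return +1 or -1 depending on the side of the hyperplane w . x + b = 0."""
    return 1 if decision_value(weights, bias, features) >= 0 else -1


# Hypothetical, hand-picked parameters (NOT learned from data).
weights = [1.0, -1.0]
bias = 0.0

# Two made-up feature vectors, one on each side of the line x1 = x2.
label_a = classify(weights, bias, [2.0, 0.5])
label_b = classify(weights, bias, [0.5, 2.0])
print(label_a, label_b)
```

Once the weights are known, classifying a new input is a single dot product and a comparison, which is part of why this kind of classical model is a plausible fit for small processors in home devices.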

What’s more intriguing is that SDKs under development allow the capabilities used in one vertical industry to be applied to another. For instance, the low power consumption of mobile payment solutions can be used in other devices that run on batteries, automotive processing speeds can be utilized in homes, and benchmark silicon-based security can benefit any application.

Again, the human body analogy is useful: to be “aware” of the world around you, you use many different senses that involve various levels of your conscious attention, depending on the circumstances. Imagine if the connected devices in your home interacted similarly, as they often do in industrial settings.

The future in which you interact with your aware home the way Star Trek’s Jean-Luc Picard did with his spaceship’s computer may or may not arrive.

Instead, the aware home may well rely on reflexes and proprioception far more often than on a central processor (or connection) to gain insights and make decisions. It’s part of a significant paradigm shift we’re going to see in how data are used: if the last decade was characterized by the collection of data in massive databases, the next one will be the decade of relevant data, processed in powerful, smart, connected edge devices that share only the data necessary to operate.

I think tomorrow’s aware home will talk to itself far more than it talks to anyone or anything else, and its actions will be embedded in your everyday experience.