This

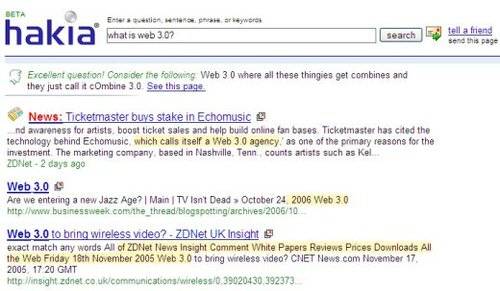

week I spoke to Hakia founder and CEO Dr. Riza C. Berkan and COO Melek

Pulatkonak. Hakia is one of the more promising Alt Search Engines

around, with a focus on natural

language processing methods to try and deliver ‘meaningful’ search results.

Alex Iskold profiled Hakia for R/WW at the beginning of December. After a number of search experiments, he concluded that Hakia was intriguing, but not yet at a level to compete with Google. It is important to note that Hakia is a relatively early beta product and is still in development. But given the speed of Internet time, 3.5 months is probably a good interval to check back and see how Hakia is progressing…

What is Hakia?

Riza and Melek first told me what makes Hakia different from Google. Hakia attempts to analyze the concept behind a search query, in particular by doing sentence analysis. Most other major search engines, including Google, analyze keywords. Riza and Melek told me that the future of search engines will go beyond keyword analysis: search engines will talk back to you and in effect become your search assistant.

One point worth noting here is that Hakia currently still has some human post-editing going on, so it isn’t 100% computer-powered at this point.

Hakia has two main technologies:

1) QDEX Infrastructure (which stands for Query Detection and Extraction) – this does the heavy lifting of analyzing search queries at a sentence level.

2) SemanticRank Algorithm – this is essentially the science they use, made up of ontological semantics that relate concepts to each other.

If you’re interested in the tech aspects, also check out hakia-Lab, which features their latest technology R&D.

How is Hakia different from Ask.com?

Hakia most reminds me of Ask.com, which uses more of a natural language approach than the other big search engines (‘ask’ a question, get an answer). Like Hakia, Ask.com also uses human editing. [I interviewed Ask.com back in November]. So I asked Riza and Melek: what is the difference between Hakia and Ask.com?

Riza told me that Ask.com is an indexing search engine and has no semantic analysis. Digging one level deeper, he said to look at the basis of their results. Ask.com bolds keywords (i.e. it works at the keyword level), whereas Riza said that Hakia understands the sentence. He also said that Ask.com’s categories are not meaning-based: they are “canned or prefixed”. Hakia, he said, understands the semantic relationships.

Hakia vs Google

I next referred Riza and Melek to Read/WriteWeb’s interview with Matt Cutts of Google, in which Matt told me that Google is essentially already using semantic technologies, because the sheer amount of data that Google has “really does help us understand the meanings of words and synonyms”. Riza’s view is that Google works with popularity algorithms, and so it can “never have enough statistical material to handle the Long Tail”. He says a search engine has to understand the language in order to properly serve the Long Tail.

Moreover, Hakia’s view is that the vastness of data that Google has doesn’t solve the semantic problem. Riza and Melek think there needs to be that semantic connection present.

Their bigger claim, though, is that the big search companies are still thinking within an indexing framework (personalization, etc.). Hakia thinks that indexing has plateaued and that semantic technologies will take over for the next generation of search. They say that semantic technologies allow you to analyze content, which they think is ‘outside the box’ of what the big search companies are doing. Riza admitted that Google may be investigating semantic technologies behind closed doors. Nevertheless, he was adamant that the future is understanding info, not merely finding it. He said this is a very difficult problem to solve, but it’s Hakia’s mission.

Semantic web and Tim Berners-Lee

Throughout the interview, I noticed the word “semantic” was being used a lot. But their interpretation seemed to be different from that of Tim Berners-Lee, whose notion of a Semantic Web is generally what Web people think about when uttering the ‘S’ word. Riza confirmed that their concept of semantic technology is indeed different. He said that Tim Berners-Lee is banking on certain standards being accepted by web authors and writers, which Riza called “such a big assumption to start this technology”. He said that it forces people to be linguists, which is not a common skill.

Furthermore, Riza told me that Berners-Lee’s Semantic Web is about “imposing a structure that assumes people will obey [and] follow”. He said that the “entire Semantic Web concept relies on utilizing semantic tagging, or labeling, which requires people to know it.” Hakia, he said, doesn’t depend on such structures. Hakia is all about analyzing the normal language of people, so a web author “doesn’t need to mess with that”.

Competitors

Apart from Google and the other big ‘indexing’ search engines, Hakia is competing against other semantic search engines like Powerset, and hybrids like Wikia. Perhaps also Freebase, although Riza thinks the latter may be “old semantic web” (but he says there’s not enough information about it to say for sure).

Conclusion

Hakia plans to launch its version 1.0 (i.e. get out of beta) by the end of 2007. As of now, my assessment is the same as Alex’s was in December: it’s a very promising, but as yet largely unproven, technology.

I also suspect that Google is much more advanced in search technology than Mountain View is letting on. We know that Google’s scale is a huge advantage, but their experiments with things like personalization and structured data (Google Base) show me that Google is also well aware of the need to implement next-generation search technologies. Also, as Riza noted during the interview, who knows what Google is doing behind closed doors.

Will semantic technologies and ‘sentence analysis’ be the next wave of search? It seems very plausible. With a bit more development, Hakia could well become compelling to a mass market, so how and when Google responds to Hakia will be something to watch carefully.