For a while now I have been wanting to write an essay or even a book with the title, “The Last of Our Kind,” looking ahead to a time when machines become more intelligent than humans and/or humans incorporate so much digital technology that they become post-biological creatures, indistinguishable from machines. Those digitally augmented descendants will be so different from us as to seem like an entirely different species. What happens to us? Simple “biologicals” might be able to co-exist for a time with our more intelligent descendants, but not for long. Eventually, creatures that we today consider “humans” – creatures like us – will go extinct.

At the heart of this line of thinking is the notion that what matters most is intelligence, not biology. What are we humans? At the end of the day, we are nothing more than biological containers for intelligence. And frankly, as containers go, biological ones are not ideal. We’re frail and superstitious. We don’t live very long. We need to eat and sleep. We learn slowly. Each new generation spends years re-learning all the stuff that previous generations have already learned. Some of us get sick, and others then devote enormous resources to caring for the sick ones. It’s all incredibly slow and crude. Progress takes forever.

So maybe we are just a stopping point. Maybe the whole point of biological creatures is to evolve into something that generates just enough intelligence to evolve into something else. Maybe our purpose is to create our own replacements. And maybe, thanks to computers and artificial intelligence, we are not far from reaching this huge evolutionary inflection point.

What Is To Be Done?

Others are thinking along these lines, and even trying to do something about it, as evidenced by this terrific essay in the New York Times by Huw Price, a philosopher at the University of Cambridge.

It’s a long piece but I’ve grabbed some highlights:

“I do think that there are strong reasons to think that we humans are nearing one of the most significant moments in our entire history: the point at which intelligence escapes the constraints of biology. And I see no compelling grounds for confidence that if that does happen, we will survive the transition in reasonable shape. Without such grounds, I think we have cause for concern.

“We face the prospect that designed nonbiological technologies, operating under entirely different constraints in many respects, may soon do the kinds of things that our brain does, but very much faster, and very much better, in whatever dimensions of improvement may turn out to be available.”

Price and many others believe we are nearing the point at which artificial general intelligence is achieved. But this raises profound existential questions for us:

“Indeed, it’s not really clear who ‘we’ would be, in those circumstances. Would we be humans surviving (or not) in an environment in which superior machine intelligences had taken the reins, so to speak? Would we be human intelligences somehow extended by nonbiological means? Would we be in some sense entirely posthuman (though thinking of ourselves perhaps as descendants of humans)?”

The argument that some people put forward is that machines will never be able to do everything a human can do. They’ll never be able to write poetry, or have dreams, or feel sorrow or joy or love. But as Price points out, who cares?

“Don’t think about what intelligence is, think about what it does. Putting it rather crudely, the distinctive thing about our peak in the present biological landscape is that we tend to be much better at controlling our environment than any other species. In these terms, the question is then whether machines might at some point do an even better job (perhaps a vastly better job).”

Of course machines will do a vastly better job than we humans at many things. Machines are already doing that in countless domains. Chess is one example. Stock market trading is another. Imagine what would happen to the world’s markets, and thus to the world’s economy, if tomorrow all the computers were shut off and we went back to doing it by hand. Imagine humans trying to compete side by side against machines in this domain. It’s unfathomable.

Trying To Stop The Unstoppable

Price and others are trying to come up with ways to keep this from happening, or to make sure that “good” outcomes are more likely than “bad” outcomes. Toward that end Price has co-founded the Centre for the Study of Existential Risk (C.S.E.R.) at Cambridge.

I think assigning values like “good” and “bad” to the various possible outcomes of an evolutionary process makes no sense. Evolution happens, and we don’t have control over it. Whatever rules some well-wishers might put into place to prevent certain outcomes, others will find ways to work around them. It’s what Yale computer scientist David Gelernter calls “the Orwell Law of the Future,” and it goes like this: Any new technology that can be tried will be.

We are on a path, and there is no stopping it. This is neither good nor bad; it just is. Was it bad when single-celled organisms evolved into more complex organisms and then got eaten by them? I suppose the single-celled organisms weren’t psyched about it. But without that process, we humans wouldn’t be here. And if now it is our turn to be erased by evolution, so what? From the perspective of the universe, who cares if humans cease to exist?

The great irony in all this is that we can’t stop pushing forward with dangerous technologies (AI, bioengineering) because evolution has hard-wired our brains in such a way that we cannot resist doing so, even if the consequence of this ever-upward march of evolution is that we end up rendering ourselves extinct. We humans like to believe that we, among all living creatures, are special and unique. And we are, if only because we are the first species that will knowingly create something superior to ourselves. We will engineer our own replacements. Which, when you think about it, is both brilliant and phenomenally stupid at the same time. In other words, perfectly human.

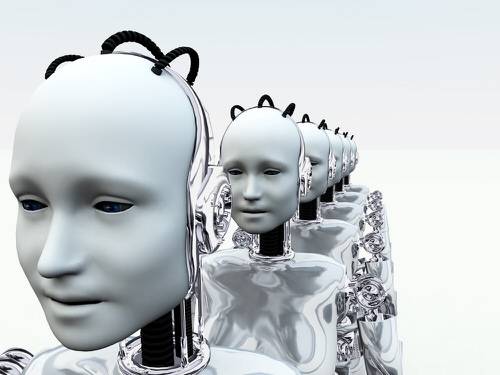

Image courtesy of Shutterstock.