Microsoft has shown off research that takes us significantly closer to a Star Trek-style universal translator: natural language translation, in real time, in the user’s own voice.

The demonstration by Microsoft chief research officer Rick Rashid (see embedded video below) at Microsoft Research Asia’s 21st Century Computing event was part of a speech to about 2,000 students in China on October 25, and doesn’t actually represent a product in the works. “This work is in the pure research stages to push the boundaries of what’s currently possible,” a Microsoft spokeswoman said in an email.

The potential value of such capability is enormous, and obvious. On Star Trek, the universal translator made alien relations possible. For business travelers and tourists, speaking even a few words of the native tongue, let alone fluently, can make a big difference. For immigrants, learning the language of their new country is often the biggest barrier to assimilation. That’s why Microsoft – and competitors like Google, among others – have worked for years to develop real-time translation systems.

Rashid’s demonstration shows a real-time speech-to-text translation engine, with a similarly real-time assessment of its accuracy. (Microsoft didn’t say how it generated the accuracy measurement.) According to Rashid, however, the accuracy has been improved by more than 30% compared to previous generations, with a current error rate as low as one in seven or eight words, or roughly 12.5% to 14%. (Disclosing the error rate is significant, as competitors like Nuance usually compare recognition rates against their own products.)
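Microsoft didn’t say how it scored the demo, so the following is only a sketch of the conventional way such figures are produced, not Microsoft’s method. It assumes the standard word error rate: the number of word substitutions, insertions and deletions needed to turn the recognizer’s output into the correct transcript, divided by the number of words in the correct transcript. The function name and the sample sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words.

    Assumption: this is the conventional metric, not necessarily the one
    used in Rashid's demonstration.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (excluding the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from hypothesis
                curr[j - 1] + 1,     # extra word in hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical transcript pair: one wrong word out of eight.
reference = "the universal translator made alien relations possible today"
hypothesis = "the universal translator made alien relation possible today"
print(f"{word_error_rate(reference, hypothesis):.1%}")  # 12.5%
```

One wrong word in eight works out to 12.5%, and one in seven to about 14.3%, which is where the range quoted above comes from.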

Translation Needs Big Data

Microsoft is no stranger to automated translation; on Halloween, the company announced that it would be working with researchers in Central America to build a version of the Microsoft Translator Hub to preserve the Mayan language. The Hub lets users create a model, add language data, then use Microsoft’s Windows Azure cloud service to power the automated translation. The idea, as Microsoft took pains to explain, was to preserve the dying language through the next b’ak’tun, the calendar cycle that ends this December, prompting waves of end-of-the-world predictions, including movies like 2012.

As Microsoft’s Translator Hub suggests, translation is predicated upon big data. The calculations are immensely complicated, not just dealing with the phonemes that make up each word, but also working out how thoughts are organized into proper grammar, as well as other elements like the genders of certain nouns, honorifics, and other cultural nuances. Microsoft built speech-to-text tools into Windows XP, as Rashid points out, but the technology suffered an error rate of about one in every four words on arbitrary speech. Although speech-to-text (and text-to-speech) has remained inside of Microsoft’s software as an accessibility tool, it hasn’t yet served as a general replacement for the keyboard, even with some of the top minds in the industry powering technologies like Apple’s Siri, as Scott Forstall’s departure from Apple demonstrates.

Typically, speech recognition is improved through training, as the software learns how a user pronounces various phonemes and generally becomes familiar with how the user says individual words.

His Master’s Voice

Rashid’s demonstration went a step further, however. The software not only learned what Rashid was saying, but also parsed the meaning, reorganizing it into Chinese. It then recast the Chinese phonology in Rashid’s natural voice. How? By using a few hours of speech from a native Chinese speaker and properties of Rashid’s own voice taken from about one hour of pre-recorded English data – recordings of previous speeches he had made.

Real-time voice translation isn’t exactly new in the mobile space. Both Microsoft and Google, for example, have released apps that can translate text that a smartphone camera sees. And both offer “conversational modes” that are actually more akin to a CB radio: one person talks, taps “stop,” the phone translates and plays back a recorded voice, the other person speaks, and so on. What Rashid’s demonstration showed off was a much more conversational, continuous, natural means of translation.

And as Rashid’s blog post and the video highlight, the crowd applauded nearly every line. That’s the type of response any business traveler or tourist would welcome when trying to make herself understood.

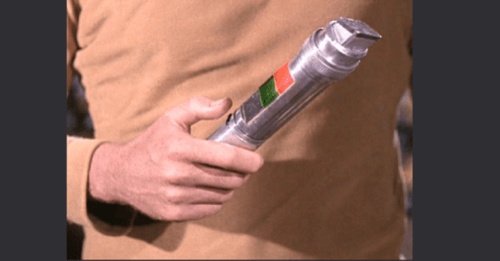

Lead image from Memory Alpha.