Robots have become commonplace in many areas of life, including health care, the military and security work. Yet until recently, little thought has been given to the ethics of robots outside academic circles.

Silicon Valley Robotics recently launched a Good Robot Design Council, which has published its “5 Laws of Robotics”. These are guidelines for roboticists and academics on the ethical creation, marketing and use of robots in everyday life. The laws state:

- Robots should not be designed as weapons.

- Robots should comply with existing law, including privacy.

- Robots are products; they should be safe, reliable and not misrepresent their capabilities.

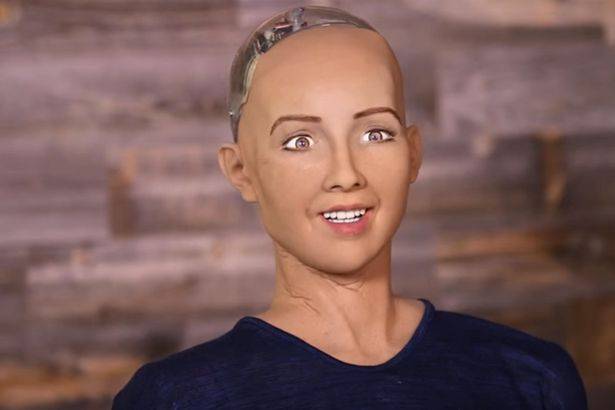

- Robots are manufactured artifacts; the illusion of emotions and agency should not be used to exploit vulnerable users.

- It should be possible to find out who is responsible for any robot.

The laws have been adapted from the 2010 “Principles of Robotics” published by the EPSRC (Engineering and Physical Sciences Research Council). A few months ago in Britain, the British Standards Institution (BSI) presented a similar document, “BS8611 Robots and robotic devices”, at the Social Robotics and AI conference in Oxford. The document is an approach to embedding ethical risk assessment in robots. It was written by a committee of scientists, academics, ethicists, philosophers and users. It is intended to help designers and managers of robots and robotic devices identify and avoid areas of potential ethical harm. Like the US laws, it echoes Isaac Asimov’s “Three Laws of Robotics”, and it asserts:

“Robots should not be designed solely or primarily to kill or harm humans” and also that “humans, not robots, are the responsible agents; it should be possible to find out who is responsible for any robot and its behavior.”

Dan Palmer, Head of Manufacturing at BSI, said:

“Using robots and automation techniques to make processes more efficient, flexible and adaptable is an essential part of manufacturing growth. For this to be acceptable, it is essential that ethical issues and hazards such as dehumanization of humans or over-dependence on robots, are identified and addressed. This new guidance on how to deal with various robot applications will help designers and users of robots and autonomous systems to establish this new area of work.”

The standard builds on existing safety requirements for industrial, personal care and medical robots. It also recognizes that integrating robots and autonomous systems into everyday life creates potential ethical hazards. This is particularly true when robots take on a care or companionship role, for example with children or the elderly.

Robot design and emotional connections

For a while, you could buy costumes for iRobot Roomba vacuum cleaners. The PARO Therapeutic Robot is used with great success in nursing homes around the world as a companion for the elderly. But what happens when the relationship ends? Sony released its AIBO robotic dog in 1999 and discontinued it in 2006. It then announced that repairs and spare parts would end in 2014. One need only look at the grief that followed: for many owners, it was like watching a pet slowly die. In Japan, Buddhist funerals were even held for AIBO dogs. The reality is that when people associate robots with pets or humans, they form emotional connections with them.

Robots for love and maybe more?

The idea of robot lovers is not as far-fetched as it might sound. Films such as Her and Ex Machina and the television series Humans present a future where humans fall in love with AI and robots or want physical relationships with them. However, no sex robots are currently widely available. The closest equivalent seems to be highly lifelike sex dolls, including extremely disturbing ones that resemble children.

Sex business entrepreneur Bradley Charvet has revealed plans to open a sex robot café in London, where visitors will be able to receive “cyber-fellatio” while drinking tea. He said: “Sex with a robot will always be pleasing and they could even become better at techniques because they would be programmable to a person’s need. It’s totally normal to see a new way of using robots and other sex toys to have pleasure.”

Human attachment to robots at this level makes many people uncomfortable. The second annual Love and Sex with Robots academic conference was due to be held in Malaysia in November 2015. In October, however, the Inspector-General of Police declared the conference illegal, citing the country’s conservative morals and likening robots to deviant culture. As a result, the conference was abruptly canceled. The next conference will take place in London in December. There is even an organization, the Campaign Against Sex Robots, which predicts that such robots are not far away. It argues that:

“The development of sex robots will further reduce human empathy that can only be developed by an experience of mutual relationship. We challenge the view that the development of adult and child sex robots will have a positive benefit to society, but instead further reinforce power relations of inequality and violence.”

Even forensic researchers who study the treatment of sexual deviance say:

“It’s very important to understand this because we need to do more to prevent child sexual abuse and exploitation. And it’s only a matter of time before dolls are souped up with artificial intelligence. How lifelike can they get? Will more realistic technologies help reduce the problem, or make it worse? We need to start figuring out what the impact will be. The cost if we don’t explore it is intolerably high.”

What about robots and physical violence?

While robots aren’t sentient androids (yet), it’s worth remembering that they are already being used to commit acts of violence. Armed drones, which are essentially flying robots, carry out unmanned strikes in both war zones and civilian areas. In the US, we have recently seen police use a robot to detonate a bomb after an attack on officers in Dallas. The explosion killed Micah Johnson, who had killed five police officers and wounded seven others. This action sets a new precedent for how robotic devices are used domestically. As Ryan Matthew Pierson writes:

“This is the first known case of a United States police department using a remotely positioned explosive to kill a suspect. Where gunfire and other means of lethal force are unfortunately common practice by police in the United States, using a robot to administer that force is new.”

If the military and police are excluded from the “do no harm” pledges of the Good Robot Design Council and BSI, what precedent does that set? What role should defense play in robot ethics when robots are designed to kill during police or military operations? Will ethics keep pace with technological advances and the growing range of uses for robots? Only time will tell.