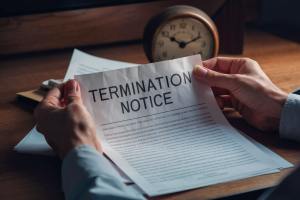

Major games studio abruptly shuts down, blaming leaks to video game journalist

A studio founded by the creator of State of Decay has closed, with its founder blaming leaks to the gaming press for the shutdown. Jeff Strain, who started Possibility Space in 2021, told all staff last week that the studio was shutting down immediately, according to an email obtained by Polygon…