Using a cloud computing service may sound enticing, but you had better consider how your data can be moved if you ever want to switch to a different provider.

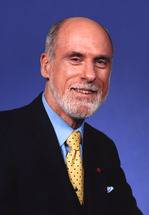

It’s a big problem that now has the attention of Vint Cerf, who is calling for standards to define how customer data gets passed between different cloud service providers.

Cerf, Google’s chief Internet evangelist, is one of the legends of the tech world, up there with people like Steve Wozniak. He is one of the co-designers of the TCP/IP protocols, and one of the few who, way back when, had the idea of hooking computers together to create a network. Today we call that network the Internet.

So you listen when Cerf gets up to speak and says that it’s like 1973 out there when it comes to cloud computing data portability.

According to InfoWorld, Cerf said that major cloud service providers such as Amazon, Google and IBM offer no real interoperability. He spoke Thursday night at the Churchill Club in Menlo Park, Calif.

“We don’t have any inter-cloud standards,” Cerf said. “The current cloud situation is similar to the lack of communication and familiarity among computer networks in 1973.”

People will want to move data around. They may have multiple cloud service providers, and they may want to use those providers as an interconnected network. Above all, customers will simply want to move data from Cloud A to Cloud B.

Cerf went on to say that the industry needs to develop protocols and standards to make this all happen. It’s important to note that Google, Cerf’s employer, obviously has a stake in how this all pans out.

We went to Aardvark to ask about this issue.

What can you do right now to avoid getting locked into one cloud service provider?

Marc Limotte, director of engineering at Feeva Technology, writes:

“The obvious problem is that the difficulty of switching limits consumer choice and therefore competition. You can’t ‘vote with your feet’ if you can’t walk away.

This is common in IT, though. It’s never been easy to switch from one enterprise package to another, or from one hosting facility to another.

The data isn’t even the worst of the problem. In most cases you can at least get an extract (even if it is terabytes of data) and load it into some other system. The more complex issue arises when you architect your solution to take advantage of a vendor’s proprietary services (e.g. the data store in Google App Engine, or Amazon’s SQS). Not that you shouldn’t use these features… they’re useful; just be aware that they start to limit your options if you want to someday move away from that platform.

My suggestion… make sure you know how to export your data. And try to put your own interfaces in front of custom services. That way, if you want to move, you just have to write an adapter, not re-architect the whole thing.”
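Limotte’s two suggestions, owning the interface and keeping an export path, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not code from Limotte, Feeva or any cloud provider: `MessageQueue`, `InMemoryQueue`, `RecordStoreQueue`, `enqueue_order` and `migrate` are hypothetical names, and the two queue classes are in-memory stand-ins for real services such as Amazon’s SQS. Application code talks only to the interface, so changing providers means writing one new adapter plus a one-off data copy through a vendor-neutral JSON dump.

```python
import json
from abc import ABC, abstractmethod
from collections import deque
from typing import Deque, Dict, List, Optional


class MessageQueue(ABC):
    """The application's own queue interface; business code sees only this."""

    @abstractmethod
    def send(self, body: str) -> None:
        """Enqueue one message."""

    @abstractmethod
    def receive(self) -> Optional[str]:
        """Dequeue the oldest message, or return None if the queue is empty."""

    @abstractmethod
    def export_all(self) -> List[str]:
        """Return pending messages in order, without removing them."""


class InMemoryQueue(MessageQueue):
    """Hypothetical adapter standing in for provider A's queue service."""

    def __init__(self) -> None:
        self._items: Deque[str] = deque()

    def send(self, body: str) -> None:
        self._items.append(body)

    def receive(self) -> Optional[str]:
        return self._items.popleft() if self._items else None

    def export_all(self) -> List[str]:
        return list(self._items)


class RecordStoreQueue(MessageQueue):
    """Hypothetical adapter for provider B, which stores numbered records."""

    def __init__(self) -> None:
        self._records: Dict[int, Dict[str, str]] = {}
        self._head = 0     # id of the oldest pending record
        self._next_id = 0  # id the next sent record will get

    def send(self, body: str) -> None:
        self._records[self._next_id] = {"payload": body}
        self._next_id += 1

    def receive(self) -> Optional[str]:
        if self._head == self._next_id:
            return None
        record = self._records.pop(self._head)
        self._head += 1
        return record["payload"]

    def export_all(self) -> List[str]:
        return [self._records[i]["payload"] for i in range(self._head, self._next_id)]


def enqueue_order(queue: MessageQueue, order_id: int) -> None:
    """Application code: written once against the interface, not a vendor."""
    queue.send(json.dumps({"order_id": order_id}))


def migrate(source: MessageQueue, target: MessageQueue) -> int:
    """Copy pending messages through a vendor-neutral JSON dump."""
    dump = json.dumps(source.export_all())  # portable text you could store anywhere
    messages = json.loads(dump)
    for body in messages:
        target.send(body)
    return len(messages)


if __name__ == "__main__":
    old = InMemoryQueue()
    for order_id in (1, 2, 3):
        enqueue_order(old, order_id)
    new = RecordStoreQueue()
    print(migrate(old, new))  # 3
    print(new.receive())      # {"order_id": 1}
```

In this sketch, moving from “provider A” to “provider B” leaves `enqueue_order` untouched; only the adapter class and the `migrate` call change. A real adapter would wrap the provider’s own SDK rather than a Python container, but the shape of the application code would stay the same.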