While Google I/O 2016 didn’t feature any Larry Page sightings, it was a great look at some of the newest IoT-related developments inside Mountain View.

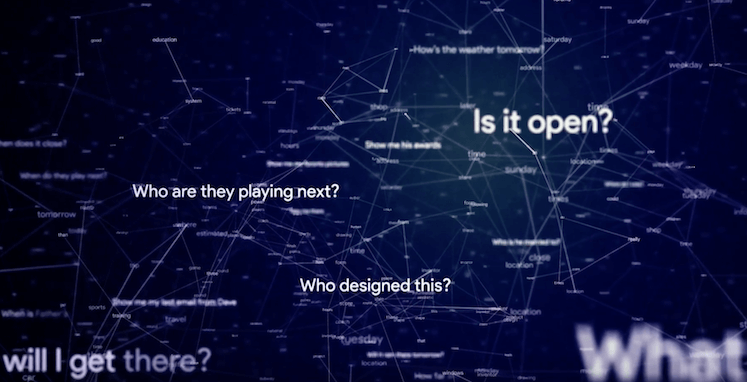

The firm has clearly begun to focus on machine learning and how interactions between AI and humans can be valuable for both parties.

Here is a roundup of what we think were the biggest IoT announcements:

Google Assistant

Intelligent assistants are all the rage these days, but Google wants a service that can handle more than a single query. Assistant is that service: a voice-controlled assistant that uses context to understand the question and provide detailed follow-up answers.

“We want users to have an ongoing, two-way dialogue with Google Assistant,” said Google CEO Sundar Pichai. “We want to help you get things done in the real world. And we want to do it for you, understand your context, letting you have control over it.”

Assistant borrows many of the features added to Now last year, like the ability to understand your questions using location, search, or other data available to Google. You can ask “Who built this?” and Assistant will work out where you are and give you the architect’s name. You can then press further, asking “Show me other things they’ve built”, and it understands that the second query is a follow-up to the first.
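To make the idea of a follow-up query concrete, here is a minimal sketch of how a conversation might carry context from one question to the next. It is a toy illustration only, not Google's implementation: the `Conversation` class, the `ARCHITECT_OF` table, the building names, and the string matching are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical toy data for illustration; not drawn from any Google service.
ARCHITECT_OF = {
    "Example Tower": "Jane Doe",
    "Sample Hall": "Jane Doe",
    "Demo Bridge": "John Roe",
}


@dataclass
class Conversation:
    """Keeps the last building and architect so a follow-up can refer to them."""

    last_building: Optional[str] = None
    last_architect: Optional[str] = None

    def ask(self, query: str, location: Optional[str] = None) -> str:
        text = query.lower()
        if "who built this" in text:
            # Resolve "this" from the user's location (an assumed input here).
            architect = ARCHITECT_OF.get(location or "")
            if architect is None:
                return "I can't tell what you're looking at."
            self.last_building, self.last_architect = location, architect
            return architect
        if "other things they" in text:
            # Resolve "they" from the previous answer instead of asking again.
            if self.last_architect is None:
                return "Who do you mean?"
            others = sorted(
                name
                for name, architect in ARCHITECT_OF.items()
                if architect == self.last_architect and name != self.last_building
            )
            return ", ".join(others) if others else "Nothing else on record."
        return "Sorry, I didn't understand that."


conversation = Conversation()
first = conversation.ask("Who built this?", location="Example Tower")
second = conversation.ask("Show me other things they've built")
print(first)
print(second)
```

The second call only works because the first one stored its answer. Asked on its own in a fresh conversation, the same follow-up has nothing to refer to.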

This kind of follow-up is impressive, but it also worked (to an extent) on Now. What’s new is the automatic ordering options on Assistant. When you ask what’s on at the cinema, Assistant will provide you with a list of movies. You can then ask it to book tickets and it will purchase them automatically. This works for ordering takeaway or booking a taxi as well, as long as the application supports Assistant.

That, as far as I can tell, is new.

Google Home

Google Home is the company’s attempt at competing with Amazon Echo. It has a similar cylinder shape, though not as tall as the Echo, and is essentially a hub for your smart home and an intelligent assistant.


You can use Home to turn down the thermostat, turn on the TV, change the color of your Philips Hue lights, and even play some music on your Chromecast Audio.

Home doesn’t have a price tag yet, but will be available later this year.

Android N

Android N has been in developer preview for a few months, but the team had a few updates to make at I/O.

We’ll run through these quickly. Android N will feature Vulkan, a new 3D graphics API that gives developers direct control of the GPU. It has less overhead than OpenGL and uses the same APIs for desktop and mobile. Android N will use a JIT compiler, which Google says is between 30 and 600 percent faster than previous Android compilers. Google also says it installs apps 75 percent faster, reduces app code size by 50 percent, improves performance, and lowers battery consumption.

Android is getting serious about security. It has added file-based encryption, which apparently works better than block-level encryption for individual users. It is also hardening the security of the media framework. From now on, Android will automatically update to the latest version, so that users who put off updating are not left exposed to attacks on older versions.

On the user side, Android N will add a clear-all button to the multitasking view, and a double tap will take you back to the most recently used app. Split-screen will also be available on mobile, tablet, and Android TV. Users will be able to reply to messages directly from a notification and can long-press a notification to block or silence it.

Android N will launch later this summer and Google is asking users to send name suggestions for the update.

Deep Neural Networks and Machine Learning

Google I/O 2016 was all about the company’s capabilities in these two fields. Pichai showed a team of engineers who used deep learning to teach robots how to pick up items properly, which looks like an early form of the future Walmart shopping assistant.

Pichai also mentioned deep learning that teaches machines how to spot early signs of diabetic retinopathy, one of the leading causes of blindness in the United States. That could be deployed onto smartphones in the future.

That Google car

While we sadly got no updates on the Google autonomous car during the keynote, it was at the event for people to look at. No free rides were available, though, and Google didn’t even take the car for a spin. No confirmation on whether it self-parked for the event.