Autonomous vehicles grab a lot of headlines these days, with Audi announcing their plans, Tesla downloading new features, and Google’s first self-caused fender bender.

We caught up with David Miller – Chief Security Officer at IoT and identity cloud platform company, Covisint – at this week’s RSA Security Conference. We talked about the slow-moving future of fast-moving autonomous cars, the perils of hacking your ride and why your new sedan may age into obsolescence as quickly as an iPhone. (Part 1 of a two-part series. Part 2 is here.)

What do you see as the key hacking risks around autonomous vehicles?

Miller: If you look at vehicle security – autonomous or not – what we have nowadays is you have the ability to start your car, or unlock your car (remotely) and how is it that I can keep that secure. But let’s be real, the worst that’s going to happen today is that someone’s going to steal your car – which isn’t great – but unless that person steals your car and runs someone over with it, people aren’t dying because of that (hack).

In the autonomous model, we’re talking about the ability to control the vehicle even if it’s not fully autonomous. I think we’re a long time away from cars that are driving down the road with no human being, no steering wheel, no driver. But we have semi-autonomous (technology) now, like adaptive cruise control. In my hybrid today, when you turn the steering wheel, you’re not actually actuating a real linkage. I’m turning something going to a computer that’s turning a motor that’s turning your wheels. If you get some (malware) in between there, the simple thing to do is what we do now on the internet, like a denial of service attack.

Everyone says ‘Well, I could make it turn into a ditch’ (if hacked), but that’s actually hard because I’ve got to hack into and figure out all this stuff. But if I can get in and (compromise) its ability to send a message – a denial of service attack – the driver could try to turn all he wants to, but the car ain’t turning.

So what about the famous video of someone hacking a Jeep?

Miller: That is real, and it was a bad design. They hacked into the infotainment system, then basically used it to be able to jump over to the command and control system, and then be able to issue commands – because the infotainment system is connected. They found the IP address on the (cell) network and sent commands to the piece of malware they put on the car to tell it to do things. It was definitely a bad design, the infotainment system should have had a lot more controls. With a lot of original equipment manufacturers (OEMs) these days, the challenge is – except for Tesla, it seems – that they design their in-vehicle technology to just be powerful enough to do just what that vehicle is designed to do, because that’s cheaper.

The idea is that they’re not going to put in a whole ton of memory in there. (OEMs) can buy very small amounts of memory to hold exactly what they need to hold, so they can save $20 a vehicle.

The problem is that every system that’s embedded in the vehicle, you’re going to end up finding some vulnerability, and you’re going to have to upgrade the system. And what you find happening is that you can’t upgrade the system. The fix takes up more space than allotted because we installed just enough memory.

When you said Tesla’s the exception, is that because they’re building from scratch?

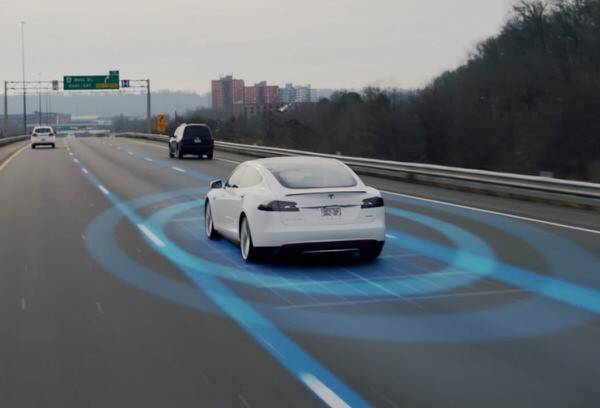

Miller: Tesla is taking a different point of view on their vehicles. They’re overdesigning them. They have huge, huge processors, they have tons of memory. They have sensors all over the place. That’s why they can simply download to add new features. That’s why they can add autonomous driving by download. They have over-engineered the vehicle from (a hardware) standpoint, so they can continually update it. Now, when you sell vehicles for $150,000, you can do that. But (Tesla founder Elon Musk) is trying to test out a point. The guys making $20-30,000 vehicles – the ones making millions of them, not 15,000 a year – they are building their vehicles exactly to spec. They have an update and they don’t want to do it. They want you to buy the next one.

So effectively a $20-30,000 car is disposable in 5 years or 7 years?

Miller: Yes, it’s designed to be disposable.

So if you find yourself needing a hardware upgrade….

Miller: The OEM’s attitude is yes, that’s what we want (for you to buy a new car). We sell cars, not computers. If I can add the feature over the air, you don’t have to buy a new car. I want you to see that, oh, the new car has all this new capability, so feel free to take your old car in (and trade up), but what everyone fails to take into account is that that old car will still be out there. It’s not like a cell phone where they’ll crush it, that old car is someone’s new car, and that old car still has the exact same vulnerabilities.

We’re going to live with a model where we’ll have these sorts of things, and with the history of cybersecurity, you have to go on the assumption that anything you build will be compromised. At some point in time, there will be some methodology that will take advantage of some vulnerability, some changes in technology. Something always comes up.

Given the way vehicles are designed, they’re not all connected. There’s a lot of cars out there that aren’t connected all the time. They’re connected when my phone is in (the cabin), but otherwise, that’s about it. Because of that, and given what we think (of these risks), why not take the security decision making up to the cloud? I’m not saying you move it all to the cloud because the cloud is more secure – I make the argument the cloud will be hacked also. The difference is that I can update a cloud-based system. The model we’ve been talking to folks about is a model of using tokenization.

What you do is this – devices request permission to use things. Imagine a device in the vehicle that wants to be able to turn the heat up in the car. Then what happens is it goes to the cloud and says ‘I think I need to turn the heat up’ and the cloud says ‘oh, you’ve identified yourself so I’m going to go ahead and create an encryption action token, hand it back to you and allow you to use it to turn up the heat in the car.’

But I certainly don’t want to make it so that every time you turn your steering wheel, the car has to have an Internet connection to do anything. You can have tokens that can have a certain duration, that are good for a period of time. So the command and control systems identify themselves and say as long as the car is running, you have permission to do this myriad of things, feel free to play it again and again and again. When you turn the car off, that permission goes away. First off, it helps if someone steals your car, you can effectively disable the vehicle. And when the system is hacked – which we think it will be eventually – you only have to go to the cloud and fix it there and you fix it once, and you don’t have to bring in a million cars (to be fixed). We think that’s the direction to go, that type of rules engine.
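For readers who want to picture the token model Miller describes, here is a minimal sketch in Python. It is not Covisint's implementation: the function names, the shared `SECRET_KEY`, the token layout and the use of an HMAC signature (rather than encryption) are all assumptions made for illustration, and "as long as the car is running" is simplified to a fixed time limit. The cloud issues a token that permits one device to perform one action until an expiry time, and the vehicle can then check that token locally, again and again, without an Internet connection.

```python
import base64
import hashlib
import hmac
import json

# Hypothetical secret shared by the cloud service and the in-vehicle verifier.
# A real deployment would need proper key management; this is only a demo value.
SECRET_KEY = b"demo-key-not-for-production"


def issue_token(device_id, action, now, ttl_seconds):
    """Cloud side: sign a claim that `device_id` may perform `action` until expiry."""
    claims = {"device": device_id, "action": action, "expires": now + ttl_seconds}
    payload = json.dumps(claims, sort_keys=True).encode()
    signature = hmac.new(SECRET_KEY, payload, hashlib.sha256).digest()
    return (
        base64.urlsafe_b64encode(payload).decode()
        + "."
        + base64.urlsafe_b64encode(signature).decode()
    )


def verify_token(token, device_id, action, now):
    """Vehicle side: accept the token only if it is authentic, matches, and is unexpired."""
    try:
        payload_b64, signature_b64 = token.split(".")
        payload = base64.urlsafe_b64decode(payload_b64)
        signature = base64.urlsafe_b64decode(signature_b64)
    except ValueError:
        # Malformed token: wrong number of parts or invalid base64.
        return False
    expected = hmac.new(SECRET_KEY, payload, hashlib.sha256).digest()
    if not hmac.compare_digest(signature, expected):
        return False
    claims = json.loads(payload)
    return (
        claims["device"] == device_id
        and claims["action"] == action
        and now < claims["expires"]
    )


# The heater asks the cloud once, then reuses the token until it expires.
token = issue_token("hvac-1", "heat_up", now=1000, ttl_seconds=60)
print(verify_token(token, "hvac-1", "heat_up", now=1030))  # within the time limit
print(verify_token(token, "hvac-1", "heat_up", now=1060))  # after expiry
```

In this sketch the permission rules live only in `issue_token`, which matches the point about fixing a problem once in the cloud: changing who gets a token, or for how long, does not require touching the verification code in the car.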