I suffer from severe data envy when it comes to LinkedIn. They have detailed information on millions of people who are motivated to keep their profiles up-to-date, collect a rich network of connections and have a strong desire from their users for more tools to help them in their professional lives. Over the past couple of years Chief Scientist DJ Patil has put together an impressive team of data scientists to deliver new services based around all that information.

One of my favorites is their career explorer, using the accumulated employment histories of millions of professionals to help students understand where their academic and early job choices might lead them.

Ali Imam’s connection network, via Russell Jurney

Their work also shows up in some more subtle ways too, like the Groups you may like service that relies on their analyses and the SNA team’s work to recommend groups that seem connected to your interests. This kind of recommendation technology is at the heart of a lot of their innovative tools, initially as the first company to create a “People you may know” service, but more recently their Jobs Recommendation work both for job-seekers and recruiters.

What I really wanted to know was how they dealt with that deluge of information, so I sat down with Peter Skomoroch of their data team to understand the tools and techniques they use. The workhorse of their pipeline is Hadoop, with a large cluster running a mixture of Solaris and CentOS. Jay Kreps has put together a great video and slides on LinkedIn’s approach to the system, but Peter tells me they’re big fans of Pig for creating their jobs, with snippets of Python for some of the messier integration code.

Before he joined LinkedIn, he frequently used SQL for querying and exploring his data, but these days he’s almost exclusively using lightweight MapReduce scripts to do that sort of processing. To control the running of Hadoop jobs, they’ve recently open-sourced their internal batch-processing framework Azkaban which acts as the circus ringmaster for all of the processes people throughout the company need the cluster to run, both one-offs and recurring tasks.

That takes care of pulling in data, but how do you handle getting it somewhere useful once the job is finished? They have created a custom Hadoop step that they can insert to push the information directly to their production Voldemort database. That sounded a bit scary to me, so I asked Peter how they dealt with mistakes that pushed bad data onto the site. That’s where Voldemort’s versioning saves the day apparently, letting them easily roll back to a previous version before any erroneous changes.

The secret ingredient that most surprised me about their pipeline was Peter’s use of Mechanical Turk. A lot of their headaches come from the fact that users are allowed to enter free-form text into all the fields, so figuring out that strings like “I.B.M.”, “IBM” and “IBM UK” are all the same company can be a real challenge. You can get a long way with clever algorithms, but Peter told me what when these sort of recognition problems get too hairy, he reaches for his ‘algorithmic Swiss Army knife’: the human brain-power of thousands of Turks.

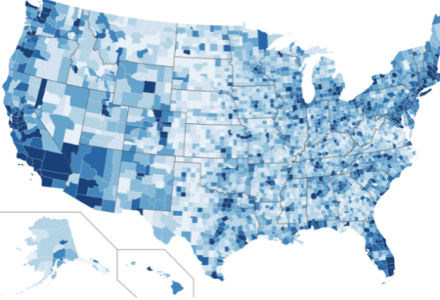

As an example, he described how he used the workers to solve the problem of recognizing the place names that Twitter users put into the location field. By asking the Turks to find the places he couldn’t understand with an algorithm, he was able to create a much richer view of the user’s locations than a simple API could offer. That project’s reliance on GeoNames led me to wonder about their use of public data sources. Peter is hooked on the Wikipedia dumps, especially for spotting co-occurrences of related words and n-gram frequencies.

Twitter users by location, thanks to Mechanical Turk matching

LinkedIn is now over seven years old, and has demands on its systems that dwarf most startups technical problems, but it’s been trying to pick up a few tricks from the early-stage world. In particular JRuby has been a godsend, letting them easily create front ends for the services their back-end data processing makes possible, and the LinkedIn team integrate them seamlessly into their existing Java infrastructure. It’s not just the tools that Ruby provides, it’s the fact they can tap into a large pool of developers familiar with the technology.

One funky little project he mentioned that I hadn’t run across is Scalatra, a tiny Scala framework designed to work like Ruby’s Sinatra. Other open-source projects that get an honorable mention are Protovis for Web graphs and charts, Tweepy for interacting with the Twitter API and Gephi for visualizing complex networks. They use Gephi heavily for exploring their networks, and they’re such big fans they recently hired the project’s co-founder, Mathieu Bastian.

Since they rely so heavily on open-source software, they’re keen to contribute back to the community. Voldemort, invented by Jay Kreps, is probably their best-known project, but they put all of their open-source components up on SNA-Projects.com. As the hire of Mathieu shows, this isn’t pure altruism – they benefit from access to a community of developers for new hires, and Peter asked me to mention they are keen on finding more Rubydevelopers to join their data team. I kept catching glimpses of the unreleased tools they’re working on; they have an ambitious pipeline of upcoming releases, so they need all the engineering help they can find.

Hearing about all the fun they’re having (and the results they’re achieving) did nothing to help my data envy, but it did leave me with lots of ideas for improving my own processing pipeline. What are your favorite data processing tools for this sort of work? What should LinkedIn be looking at? Let me know in the comments.