Earlier this week, Mark Zuckerberg claimed that Facebook’s recent privacy changes were not nefarious, but rather an unselfish pursuit of “a concept called data portability.”

As one of the people who popularized that concept in relation to social networks, and as a founding member of the organization representing that cause, I’d like to call bullshit on that.

Guest author Chris Saad is VP of strategy at Echo, a leading provider of comment/conversation technology to Tier 1 publishers. His role is to track trends in the marketplace, listen to and participate in the community, and translate those needs into actionable product direction. His background includes co-authoring the Synaptic Web strawman, co-authoring the Attention Profiling Markup Language (APML) specification, and co-founding the DataPortability Project. The DataPortability Project’s mission is to advocate interoperable data portability for users, developers and vendors.

Until now I have stayed largely silent on the privacy hoopla because data portability and the open Web are not strictly related to privacy – at least in the sense that things don’t need to be public for them to be portable or interoperable.

For example, just because the Web is based on open technologies (HTTP, HTML, SSL, JavaScript, etc.), it does not mean using your credit card on a properly configured website is public or unsafe. Sending email from one person to another does not mean third party websites can now suddenly “instantly personalize” their recommendations to you based on keywords found in your inbox.

Despite being based on interoperable technologies, these transactions remain private and secure.

Advocating Open Technologies Is Not Promoting the Death of Secrets

In the face of this, however, Mark Zuckerberg and Facebook continue to (deliberately?) confuse the idea of open technologies with “sharing in public.” The attempt to correlate the two things is at best misinformed and at worst dishonest.

With his latest statement, Zuckerberg and Facebook are now going so far as to describe their privacy missteps as “data portability.” Actually, Facebook’s changes have nothing to do with data portability. In fact, the root of the user backlash has less to do with what the company is doing than with how it is doing it.

Its problem is that, as a service, Facebook started as a place for people to share with friends and family in a private setting. Users expected privacy. This expectation is referred to as a “social compact.” It is an implied agreement that has less to do with the terms of service and more to do with user expectations and ethics. When I give you my business card, for example, I expect (through our implied social compact) that you won’t give it to spammers.

It turns out, however, that this compact was good for users but not great for Facebook’s business. There are two broad reasons why Facebook has felt forced to make the service more public.

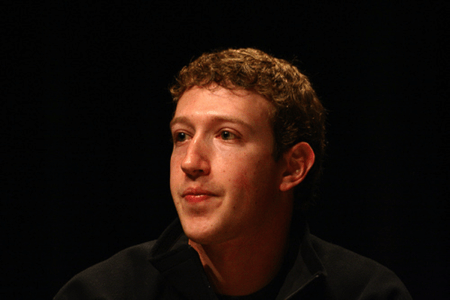

Mark Zuckerberg Facebook SXSWi 2008. Photo by deneyterrio.

First, it’s hard, if not impossible, to monetize private communication. People don’t use those kinds of services with the intent to buy, but rather with the intent to communicate. Intention is critical when it comes to advertising and e-commerce.

Second, competition from services like Twitter has made it cool to be public, and Twitter is finding interesting ways to monetize this public information (the least of which is selling its inventory of Tweets for $15 million a pop).

Most of Facebook’s very mainstream users, however, still just want a private place to keep up with their friends and family. In short, the economic interests of the service are not in line with the interests of its users. Despite this, Facebook has been forced to smash big cracks in its privacy blanket and has started forcing its users, en masse, to adopt more transparent and public online personas.

This (now public) data can be used by advertisers, publishers and other third parties to help Facebook attract even more users, more data and ultimately more dollars through targeted ads and micro-transactions.

The Wrong Social Compact

The problem, then, is not Mark Zuckerberg’s stated goal of making the world a more open (read: less private) place, but rather that Facebook did not initially establish the right social compact, the right promise, with its users to justify its role in this vision of public sharing.

As a result, users feel (rightly) violated. Facebook broke its promise for business purposes. And this is not the first time it has done so, nor, I suspect, will it be the last. (Remember Beacon?)

Finally – in regards to actual data portability, interoperability and the Web – the technology choices Facebook makes are anything but open. It uses proprietary technologies, protocols and formats to capture value from the Web and lock it up in its hub.

In short, nothing about its cultural or technological approach is open or interoperable; it has nothing to do with interoperable data portability – the only kind that matters.

Facebook has every right to do whatever it likes with its service. The market will decide if it continues to like the service or not. Any backlash from the media, or demands for more fairness, are largely irrelevant unless users vote with their feet and stop using the service. Facebook knows this is unlikely, though, given its deep (and growing) integration with the rest of the Web.

But claiming that users love the changes because more and more of them are stumbling into the service by way of widgets on publisher pages is dishonest. There is a real fear amongst the user base (and their partners) about these changes.

When it comes right down to it, the lack of honesty and clarity from the company and its representatives about these issues, and the continued trend of taking established language – such as “open technology” or “data portability” – and corrupting it for its own marketing purposes, is far more disconcerting than the boundaries it’s pushing with its technology choices.

What Are the Next Steps?

We, as responsible members of the technology community and the open Web, must be clear and honest about what we see, and about any threat it might pose to our industry or the wider world. While jumping on the bandwagon might be fun and easy (and even profitable), it is an abdication of our own responsibilities.

So what can Facebook do in the face of this criticism and push-back?

- Declare clearly and unequivocally that its service has changed from a private place for sharing to a tool for public publishing.

- Go beyond what it has already done to correct the issue and provide a giant status indicator on the top right of a user’s profile page indicating if they are in one of three modes: Public, Private, or Friends and Family only.

- Alternatively (although highly unlikely), it can change its business model from one based on ads and publishers to one based on charging users for pro services, in order to align its economic interests with those of its users.

What can others do to protect their privacy or capitalize on Facebook’s faults?

- Right now: Recognize that Facebook has violated user trust over and over for the sake of its business model, and will do it again. Stop sharing private information with the service.

- Short term: Create a properly private sharing network where people can feel safe to be with their friends and family.

- Medium term: Recognize (or decide to ensure) that Facebook is only one service, and in order to maintain and encourage competition and respect in the marketplace, other smaller (and not-so-small) players must be supported when making technology decisions (i.e. publishers must choose cross-platform tools and technologies).

- Long term: Continue to create an open alternative to Facebook whereby the Web is the platform and users can choose the applications that make sense for them, which includes privacy.

- Forever: Understand the difference between an “interoperable, open Web” and “Death of Privacy” – they are not the same thing.

Next week The DataPortability Project will be announcing a new initiative that will improve communications between Web services and users – stay tuned.